Alternative to OpenClaw: Personal Assistant with Claude Code and MCP After Anthropic's April 2026 Cut

By the Kiwop team · Digital agency specialized in Software Development and Applied Artificial Intelligence · Published on April 19, 2026 · Last updated: April 19, 2026

TL;DR — On April 4, 2026, Anthropic disabled the OAuth tokens that allowed OpenClaw to use Claude Max and Claude Pro subscriptions. Affected users have three options: pay "Extra Usage" at API pricing (between 5 and 50 times more expensive), migrate to OpenAI Codex, or rebuild the personal assistant on top of Claude Code + MCP. At Kiwop we chose the third path, and we document here the resulting architecture — a PA with four channels (IMAP, Gmail, Slack, Telegram), around 500 lines of our own Python code, and zero marginal cost by staying inside the Max subscription.

At Kiwop, it took us 48 hours to decide what to do after Anthropic's announcement. This article explains that decision: why we didn't switch to OpenAI Codex, why we didn't pay Anthropic's "Extra Usage", and how we rebuilt our personal assistant (PA) on top of Claude Code and the Model Context Protocol (MCP). The assistant still runs on the same Claude Max x20 subscription, with most of the channels we used before, but with one fundamental difference: the stack is ours, the code is ours, and the provider can't pull the rug out from under us.

This is not an anti-Anthropic manifesto: we still use their models daily and we believe they are the best on the market for an agency in 2026. It is an analytical read on what happens when an agent's economic model depends on a provider's policy, that policy changes, and you have to decide whether to adapt or rebuild.

Table of Contents

- What a PA is and why the word "Personal" matters

- Why we chose OpenClaw as our personal AI assistant in January 2026

- April 4, 2026: what exactly changed

- Claude Code vs OpenAI Codex as the engine for your personal assistant

- Architecture of the personal assistant with Claude Code and MCP servers

- OpenClaw vs PA: the honest comparison

- Alternative to OpenClaw in 2026: when it makes sense to build your own PA

- Frequently asked questions

- Conclusion

What a PA is and why the word "Personal" matters

A Personal Assistant (PA) is an AI assistant that serves a single person by centralizing their email, calendar, messages and documents in a conversational interface. Unlike a multi-tenant SaaS, a PA runs dedicated to a single human with exclusive access to their accounts. That definition, seemingly obvious, is what separates a well-designed PA from a commercial tool in disguise.

The distinction isn't pedantic. A human in 2026 may have three or four email addresses (the work one, the one for a side project, the personal one), two or three WhatsApp numbers, Slack accounts in different workspaces, and half a dozen synchronized calendars. A PA centralizes that entire surface area and turns it into a single conversational interface that understands who you are and what matters to you. Not because it was trained for you, but because it runs for you and only for you, with access to your accounts, your threads, your preferences.

That's the promise of a PA, and it's what made us adopt OpenClaw in January. And it's also what made us decide, in April, to rebuild it ourselves when the provider's economics changed.

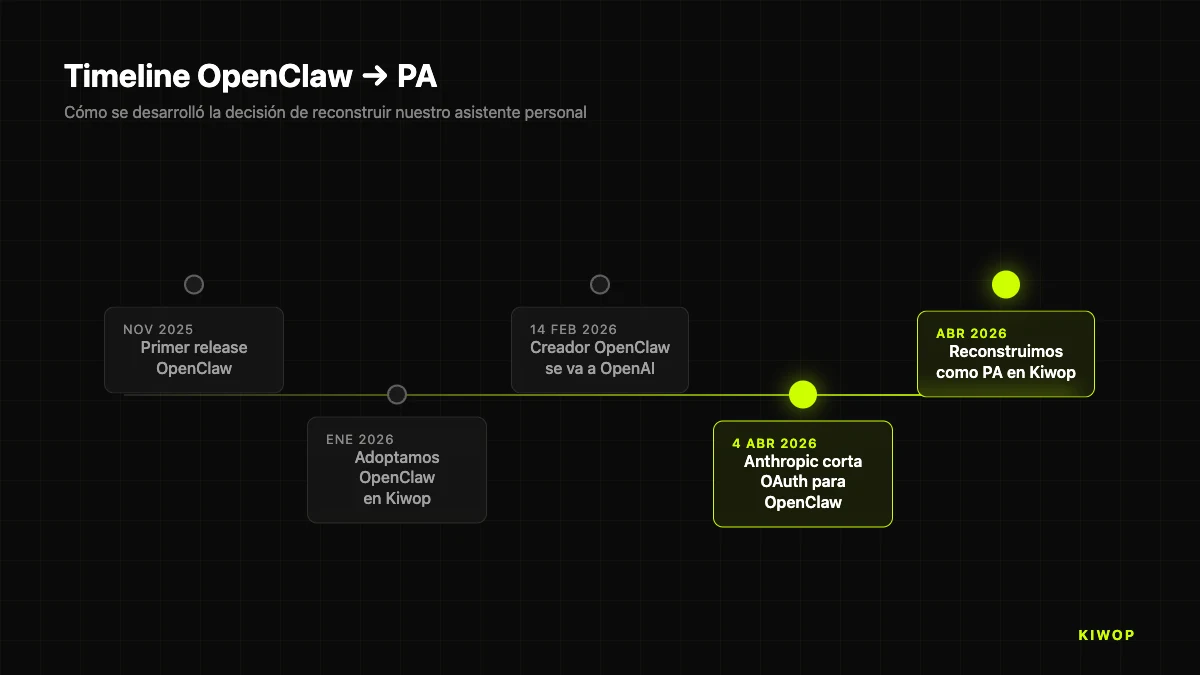

Why we chose OpenClaw as our personal AI assistant in January 2026

OpenClaw is an open source personal AI assistant launched in late 2025 that connects to channels such as WhatsApp, Telegram, Slack and more than twenty messaging platforms using a central Gateway and an extensible skills system. The project runs on your own machine (local, VPS, or fly.io) and, until April 2026, supported OAuth against the Claude Max subscription — which meant that all interactions were counted against the Max x20 plan we were already paying for. The list of supported channels includes Discord, Google Chat, Signal, iMessage, Teams, Matrix, Feishu, LINE, Mattermost, Nextcloud Talk, Nostr, Synology Chat, Tlon, Twitch, Zalo, WeChat and WebChat, among others.

The economic calculation at the end of January was simple. We had a Claude Max x20 subscription (about $200 a month) that we were already using intensively for Claude Code in development. Adding a personal assistant that processed email, read Slack, replied to Telegram messages, and did morning briefings wasn't going to generate any meaningful additional cost, because the subscription limits were more than enough. For $200 a month we had a senior AI developer during the day and a personal assistant in the morning and afternoon.

OpenClaw delivered throughout January, February and March. Every morning at eight, a briefing with my agenda and priority emails landed in Telegram. I could reply to the bot from my phone and my message would go to Claude with full email and calendar context. I could ask from WhatsApp, "do I have anything at five?" and it would answer correctly. It was, to be honest, the most useful product of the season for daily productivity.

But on April 4th everything changed.

April 4, 2026: what exactly changed

That day, Anthropic announced that Claude Max and Claude Pro OAuth tokens would stop working with "third-party harnesses" — an industry term that includes OpenClaw, Crush, Aider, and other clients that until then connected to Claude using the user's subscription credentials. Media coverage was immediate: TechCrunch, VentureBeat, The Register and Hacker News covered the news, with very active threads of users reporting significant cost increases after the change.

Anthropic's official explanation cited "the pressure on our compute and engineering resources, and our desire to reliably serve a broad number of users". The technical and economic read is more interesting.

A Claude Max x20 subscription, technically, is a contract with elastic limits: Anthropic charges you a flat rate and gives you a generous usage cap that most customers don't fully consume. That model works when sessions are launched by a human sitting at the keyboard: usage is intermittent, pauses are frequent, and actual consumption stays well below the ceiling. When you put an autonomous multi-channel agent between the human and the model — as OpenClaw does — consumption climbs in a very predictable way: every incoming message triggers a call, every response triggers another, and 24 hours a day there's traffic even when the human is asleep.

Multiplied by OpenClaw's estimated installed base at global scale, the compute cost for Anthropic was unsustainable within the subscription price. The provider's solution was to separate both worlds: Claude.ai, Claude Code and Claude Cowork remain covered by Max/Pro, but everything that goes through third-party harnesses is now billed through "Extra Usage" at API pricing.

For a user like us, who processed between 300 and 500 daily interactions with the PA (briefings, email triage, mobile replies), the projected Extra Usage bill went from "included in the $200 Max" to roughly an additional $800–$1,200 per month. It wasn't affordable. Nor was it justifiable, given that the same work still fit comfortably within the Max plan if we ran it from Claude Code directly.
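As a rough sanity check on those figures, the implied price per interaction can be derived from the numbers quoted above. This is only a sketch: it assumes a 30-day month and pairs the extremes of both ranges to get bounds, and neither assumption comes from Anthropic's price list.

```python
# Figures quoted in the article; the 30-day month is an assumption.
DAYS_PER_MONTH = 30
interactions_per_day = (300, 500)
extra_usage_per_month = (800, 1200)  # USD, projected Extra Usage bill


def cost_per_interaction(monthly_usd: float, daily_interactions: int) -> float:
    """Average cost of one interaction for a given monthly bill."""
    return monthly_usd / (daily_interactions * DAYS_PER_MONTH)


# Lower bound: smallest bill spread over the highest volume.
low = cost_per_interaction(extra_usage_per_month[0], interactions_per_day[1])
# Upper bound: largest bill spread over the lowest volume.
high = cost_per_interaction(extra_usage_per_month[1], interactions_per_day[0])

print(f"${low:.3f} to ${high:.3f} per interaction")
# prints "$0.053 to $0.133 per interaction"
```

A few cents per interaction looks harmless in isolation; it is the always-on volume that turns it into a four-figure monthly bill.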

The question then became: how do we get a multi-channel personal assistant to run inside Claude Code?

Claude Code vs OpenAI Codex as the engine for your personal assistant

The first temptation was to move OpenClaw to another provider. OpenClaw itself supports OAuth against ChatGPT and Codex, and Peter Steinberger — the project's creator — had announced in February that he was joining OpenAI. Switching the assistant to run on a ChatGPT Pro subscription or a Codex plan was technically a matter of changing the authentication profile.

We discarded that route for three reasons.

First, model quality for the tasks that matter to us. As of today, for email triage, Slack thread summarization, and calendar information extraction, Anthropic's models (especially Opus 4.7) consistently gave us better results than GPT-5 on the same prompt. It's not a generic opinion — we evaluated it during March by running the same briefings through both providers and qualitatively comparing the outputs, without a formal publishable methodology but with consistent criteria applied by the same reviewer. The conclusion for our use case (email management in Spanish and Catalan, with agency technical vocabulary) was clear in favor of Anthropic. Your mileage may vary — we recommend doing your own evaluation with your own prompts before choosing a provider.

Second, lock-in with a provider that had just demonstrated unilateral policy changes. If Anthropic could close OAuth to third parties with fifteen days' notice, any other provider could do the same. The lesson wasn't "switch providers", the lesson was "don't tie your critical daily infrastructure to third-party decisions about billing models". Moving OpenClaw to OpenAI was treating the symptom without addressing the cause.

Third, and most important: Claude Code was already in the stack. We used it every day for AI-assisted programming at the agency. It had MCP servers connected. It knew how to read our databases, run scripts, deploy to production. If instead of treating the personal assistant as a separate project we treated it as just "another use" of Claude Code, with its own CLAUDE.md, its own MCPs and its own commands, we could reuse the entire infrastructure. Crucially, that use was still covered by the Max x20 subscription because Claude Code is a first-party Anthropic product, not a third-party harness.

The decision was made in an afternoon. The project name: PA, direct and literal.

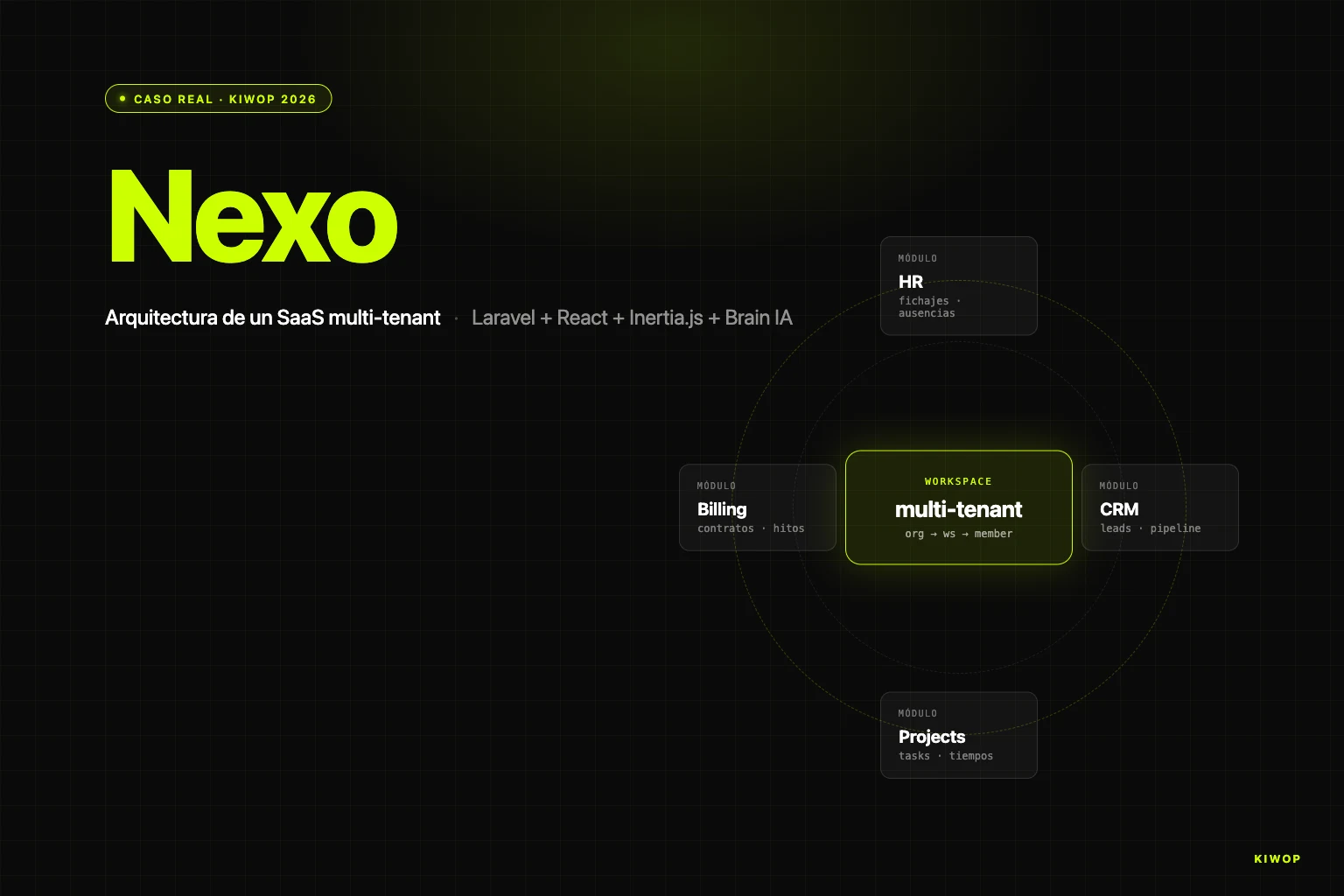

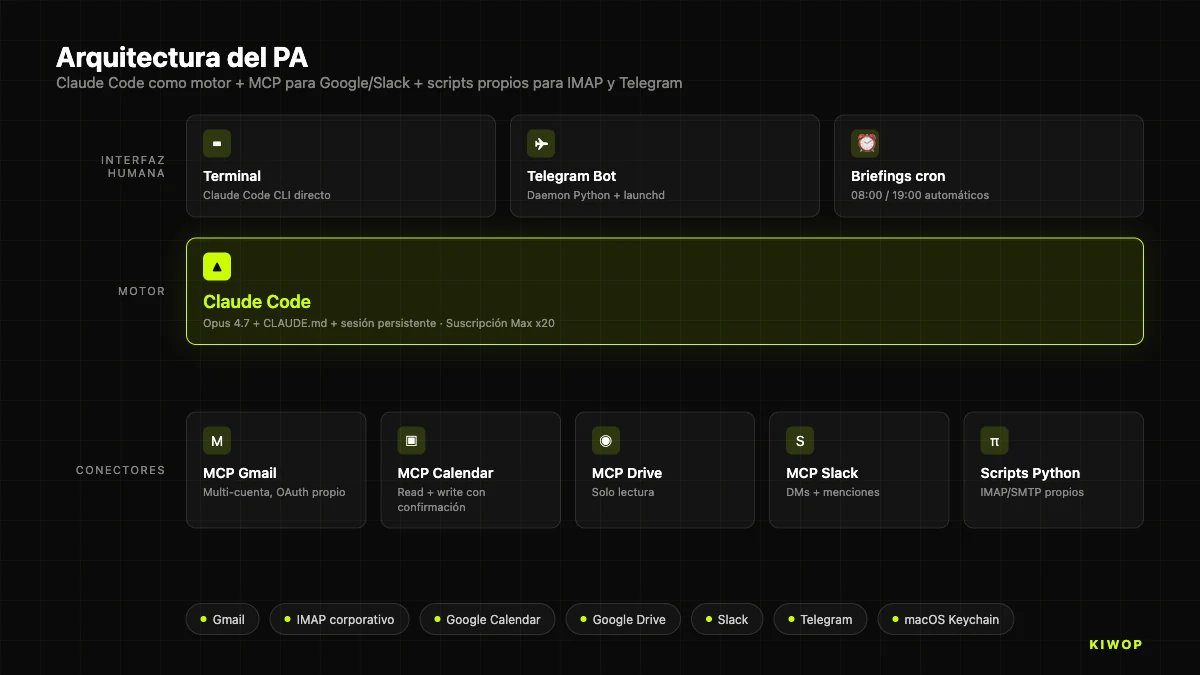

Architecture of the personal assistant with Claude Code and MCP servers

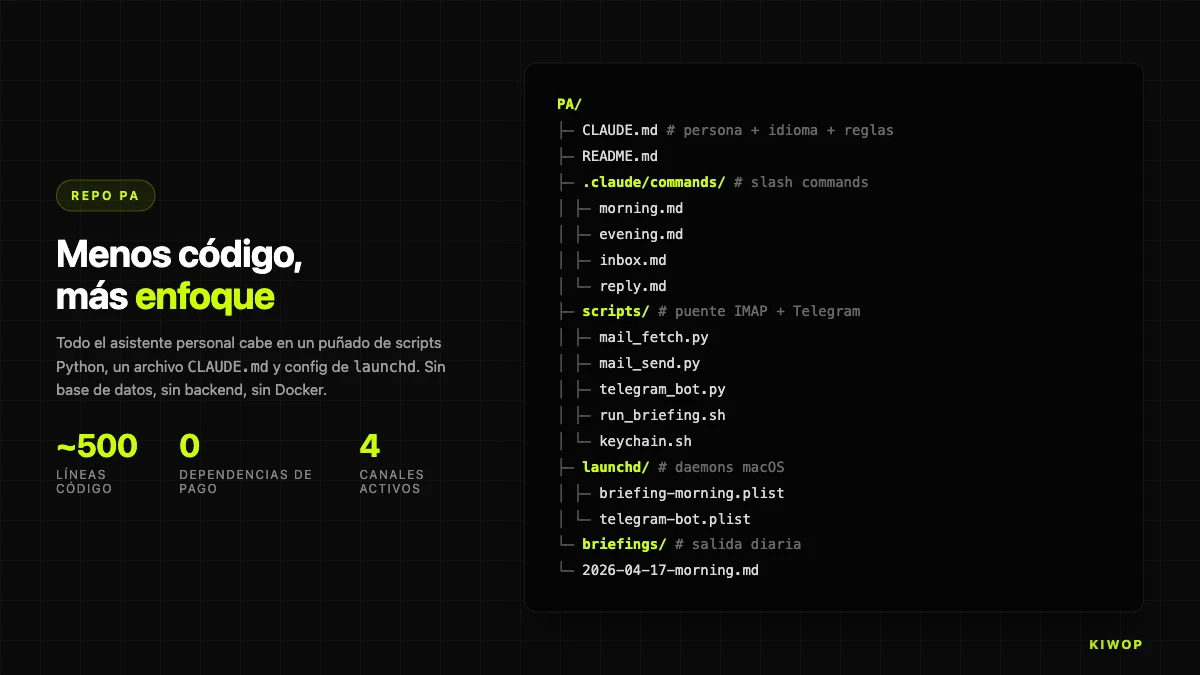

The PA runs in its own directory (~/Dev/PA) with a simple but deliberate structure. It is not a closed application: it is a set of Python scripts, Claude Code configurations and a Telegram bot that talk to each other through the operating system. The philosophy is the opposite of OpenClaw, which integrates everything inside a monolithic Gateway. In PA, each piece does one thing and the glue is Unix.

The foundation: Claude Code as the conversational engine

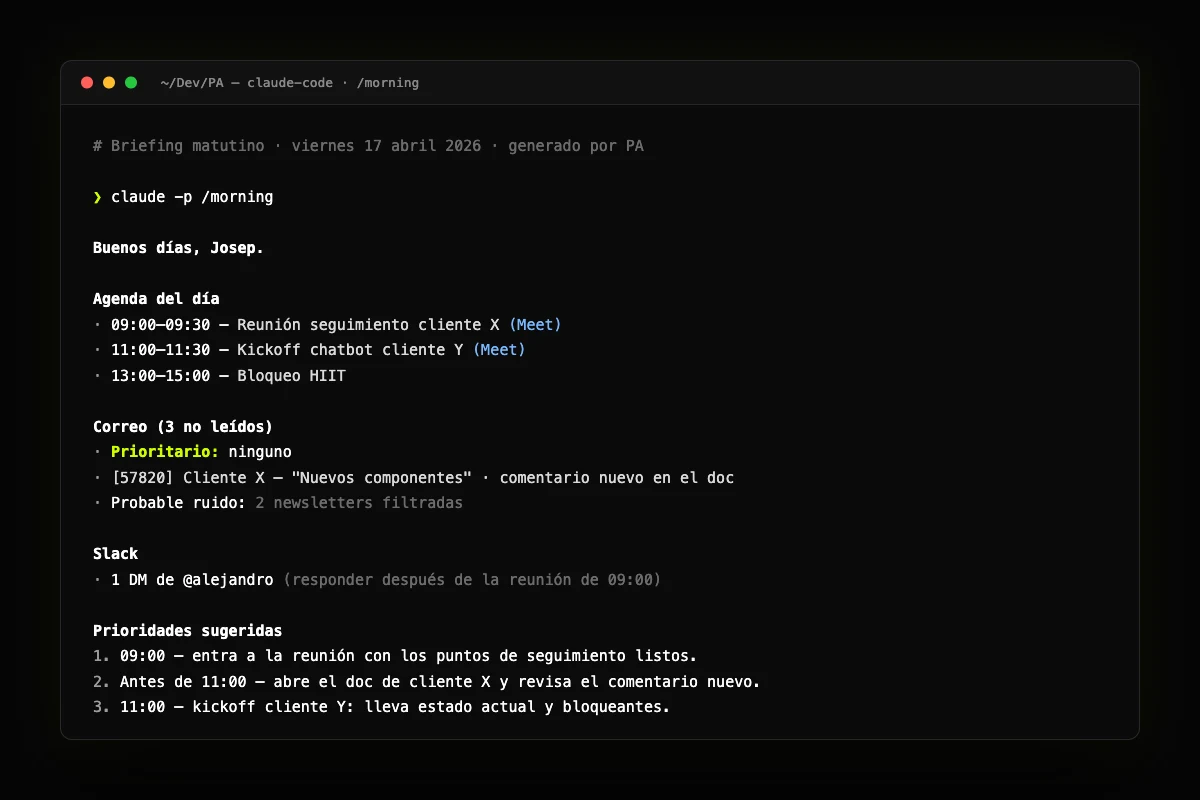

Claude Code is the brain. Not a chatbot, not an API, but the full Anthropic CLI running in "project" mode with a dedicated directory and its own CLAUDE.md file that defines persona, default language (Catalan in our case), and a mandatory confirmation rule before sending emails or creating events. That rule is non-negotiable: the PA never touches anything without asking first.

Every time we interact with the PA, whether via terminal, a scheduled command, or the Telegram bot, what happens underneath is a claude -p session with persistent context. That means the assistant's memory doesn't live in a capricious external store: it lives in Claude Code's own session flow, with the same caching and reinjection guarantees we use when developing.
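A minimal sketch of how such a call could be wrapped from Python. It assumes the Claude Code CLI is on the PATH and that its `-p`, `--output-format json` and `--resume` flags, as well as the `result` and `session_id` fields of the JSON output, behave as in the version you have installed (check `claude --help`). The helper names are illustrative and are not taken from the PA's code.

```python
import json
import os
import subprocess
from typing import Optional

# Project directory mentioned in the article.
PA_DIR = os.path.expanduser("~/Dev/PA")


def build_claude_command(prompt: str, session_id: Optional[str] = None) -> list[str]:
    """Build the argv for a non-interactive Claude Code call."""
    cmd = ["claude", "-p", prompt, "--output-format", "json"]
    if session_id:
        # Resume an earlier conversation so its context carries over.
        cmd += ["--resume", session_id]
    return cmd


def parse_claude_output(stdout: str) -> tuple[str, Optional[str]]:
    """Extract the reply text and session id (field names are assumptions)."""
    data = json.loads(stdout)
    return data.get("result", ""), data.get("session_id")


def ask_pa(prompt: str, session_id: Optional[str] = None,
           timeout: int = 300) -> tuple[str, Optional[str]]:
    """Run one turn inside the PA directory so its CLAUDE.md applies."""
    proc = subprocess.run(
        build_claude_command(prompt, session_id),
        cwd=PA_DIR, capture_output=True, text=True,
        timeout=timeout, check=True,
    )
    return parse_claude_output(proc.stdout)
```

A caller would keep the returned session id (for example in a small file) and pass it back on the next turn, which is what gives each channel a continuous conversation.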

The connectors: MCP for everything external

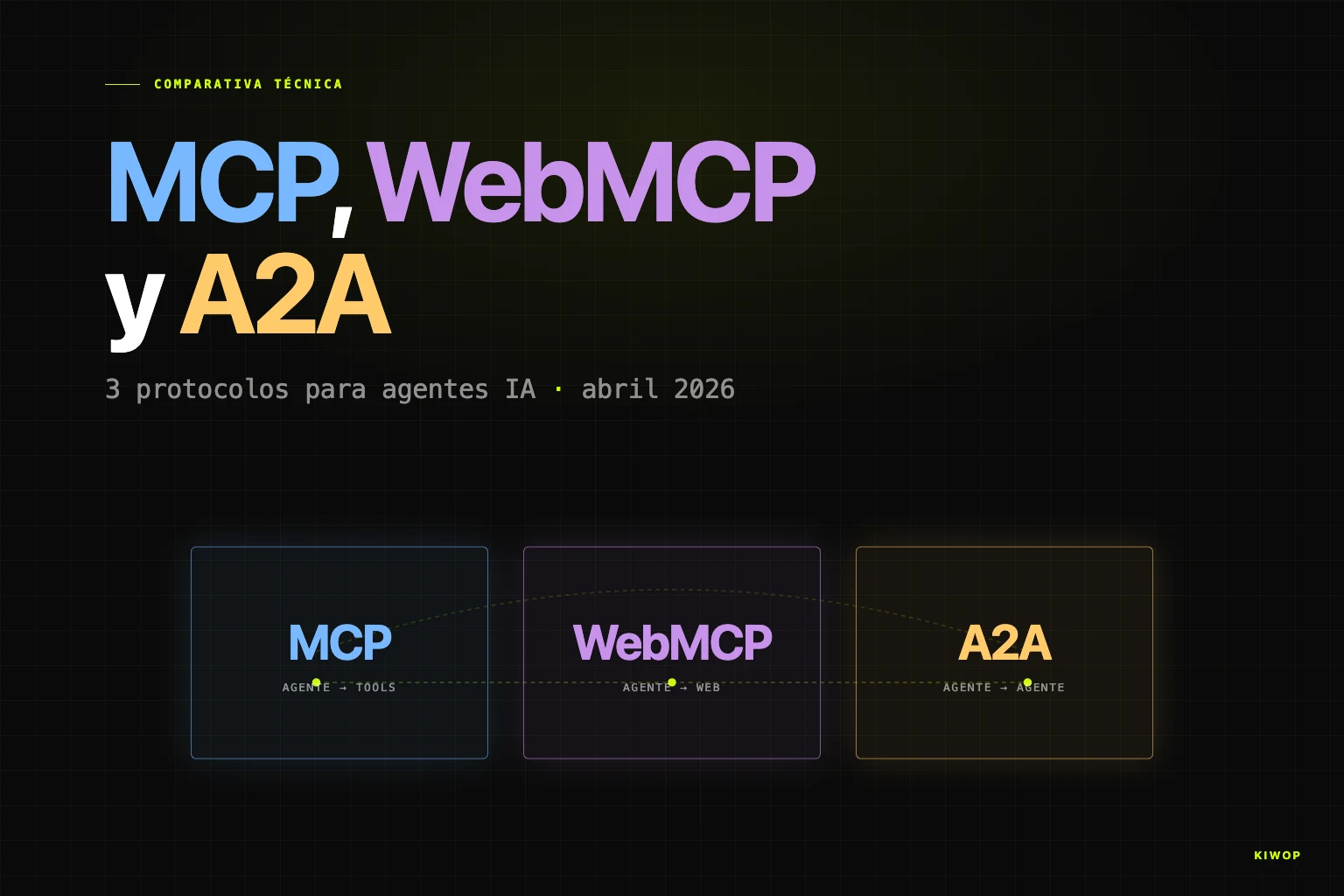

To access the outside world, the PA uses MCP (Model Context Protocol), the open standard Anthropic published in late 2024 that has become the common language of AI agents in 2026. If you want to understand what MCP is and why it matters so much, the article "WebMCP: your website ready for AI agents" goes into the technical detail. MCP is the piece that allows Claude Code to connect to enterprise systems without writing a custom connector for each service.

In the case of PA, the active MCP connectors are:

- Gmail MCP: read, draft and send email from the multiple addresses of the same person (the agency work account, the personal account, a side project account). Read always; write only with confirmation.

- Google Calendar MCP: query agenda, create and move events. Also with confirmation.

- Google Drive MCP: on-demand document search and read. Read-only.

- Slack MCP: read DMs and mentions, reply with confirmation.

All these connectors are third-party, actively maintained, and authenticate via each service's own OAuth. In no case do we pass credentials to the PA directly — the MCP servers manage authentication, and we only authorize access from the browser once during setup.
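To make the wiring concrete, here is a hedged sketch that generates a project-level MCP configuration. It assumes the servers are launched as local stdio processes and declared under an `mcpServers` key in a `.mcp.json` file at the project root; the launch commands are placeholders and do not refer to real packages, so substitute the command of whichever server you actually install.

```python
import json

# Hypothetical launch commands: replace each with the real command of the
# MCP server you install. None of these names refer to an actual package.
SERVERS = {
    "gmail": ["example-gmail-mcp"],
    "calendar": ["example-calendar-mcp"],
    "drive": ["example-drive-mcp"],
    "slack": ["example-slack-mcp"],
}


def mcp_config(servers: dict[str, list[str]]) -> dict:
    """Build the `mcpServers` mapping for stdio-launched servers."""
    return {
        "mcpServers": {
            name: {"command": argv[0], "args": argv[1:]}
            for name, argv in servers.items()
        }
    }


config_text = json.dumps(mcp_config(SERVERS), indent=2)
print(config_text)  # candidate content for .mcp.json
```

Note that no credentials appear in the file: consistent with the setup described above, authentication happens through each service's OAuth flow, not through secrets stored in the configuration.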

MCP's blind spots: IMAP mail and Telegram

MCP covers the Google + Slack surface, but leaves two important gaps for an assistant that aims to be "personal": corporate email over IMAP (a company's own mail server, not Gmail), and Telegram messages. For these two gaps, we've written custom Python scripts that act as a bridge between the outside world and Claude Code.

The IMAP client is a set of small scripts: mail_fetch.py, mail_read.py, mail_send.py, mail_flag.py. Each one does one thing. They authenticate against the company domain's mailbox by reading credentials from the macOS Keychain (never from files on disk or persistent environment variables). When Claude Code needs to read the corporate email, it invokes these scripts as tools from the session, the same way it would invoke an MCP.

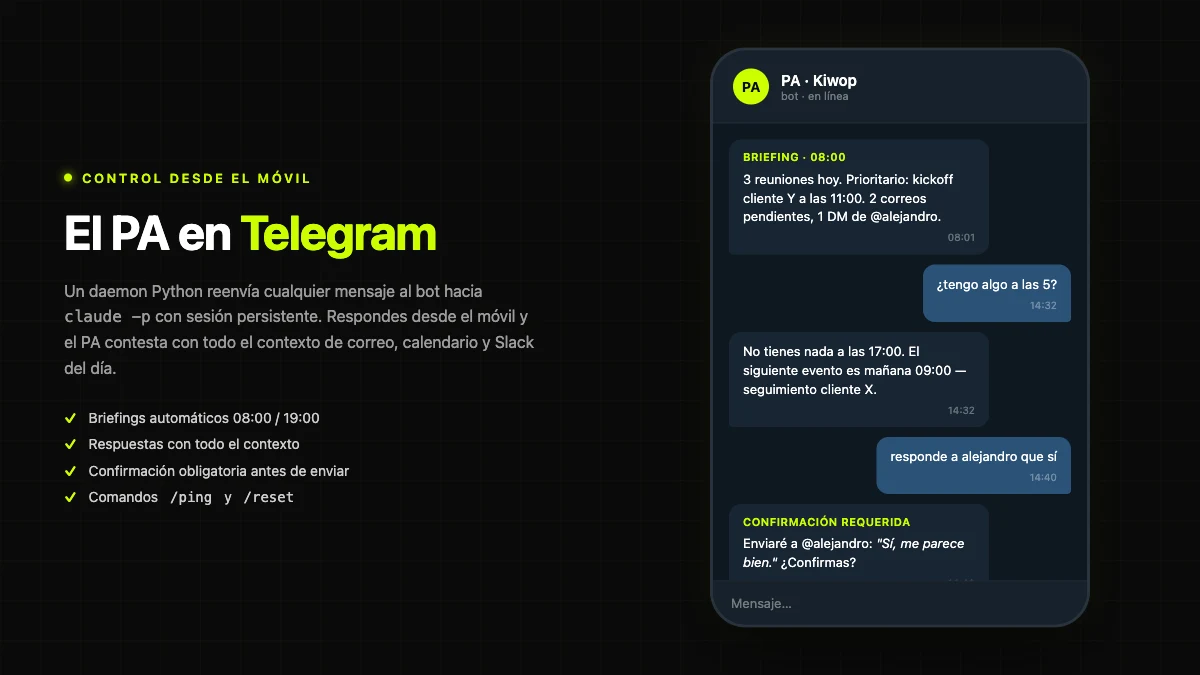

The Telegram bot is the most interesting component of PA. It's a Python daemon managed by launchd (the macOS equivalent of systemd), which starts it at login and restarts it automatically if it fails. When a message arrives at the bot from the phone, the daemon passes it to claude -p with a persistent session, waits for the response, and formats it as Telegram-compatible Markdown before returning it. The result is that you can talk to the PA from your phone as if it were any chatbot, but underneath the response is generated by Claude with full context of your email and calendar for the day.
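The core loop of such a daemon can be sketched with the standard library and the Telegram Bot API methods `getUpdates` (long polling) and `sendMessage`. This is an illustration under assumptions: the single-chat allowlist, the function names and the plain-text replies (no Markdown formatting step) are ours, and `ask` stands for whatever function forwards the text to Claude Code.

```python
import json
import urllib.parse
import urllib.request
from typing import Callable, Optional

API = "https://api.telegram.org/bot{token}/{method}"


def call_telegram(token: str, method: str, **params) -> dict:
    """POST a Bot API method with form-encoded parameters."""
    data = urllib.parse.urlencode(params).encode()
    url = API.format(token=token, method=method)
    with urllib.request.urlopen(url, data=data, timeout=70) as resp:
        return json.load(resp)


def handle_update(update: dict, ask: Callable[[str], str],
                  allowed_chat_id: int) -> Optional[tuple[int, str]]:
    """Return (chat_id, reply) for a text message from the owner, else None."""
    message = update.get("message") or {}
    chat_id = (message.get("chat") or {}).get("id")
    text = message.get("text")
    if chat_id != allowed_chat_id or not text:
        return None  # ignore strangers and non-text updates
    return chat_id, ask(text)


def poll_forever(token: str, ask: Callable[[str], str],
                 allowed_chat_id: int) -> None:
    """Long-poll Telegram and answer each message from the owner."""
    offset = 0
    while True:
        updates = call_telegram(token, "getUpdates", offset=offset, timeout=60)
        for update in updates.get("result", []):
            offset = update["update_id"] + 1  # acknowledge this update
            reply = handle_update(update, ask, allowed_chat_id)
            if reply:
                call_telegram(token, "sendMessage",
                              chat_id=reply[0], text=reply[1])
```

Restricting the bot to one chat id is a cheap way to keep a personal assistant personal: anyone else who finds the bot gets no answer and never reaches the model.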

Scheduled briefings: cron + launchd

Two scheduled briefings close out the system: one at 08:00 ("morning") and another at 19:00 ("evening"). They are triggered by cron, which runs the run_briefing.sh script passing it the briefing type. That script launches a Claude Code session with a specific prompt ("read important unread emails, check today's calendar, summarize priorities") and saves the output to briefings/YYYY-MM-DD-morning.md. If the Telegram bot is active, the same script pushes the briefing to the chat so it lands on the phone before the day begins.
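The file-naming and scheduling side of this can be sketched in a few lines. The article's runner is a shell script; the Python below is an equivalent illustration, not a transcription of it. The crontab paths are placeholders, and the helper names are hypothetical.

```python
from datetime import date
from pathlib import Path
from typing import Optional

# Example crontab entries (the script path is a placeholder):
#   0 8  * * * /path/to/run_briefing.sh morning
#   0 19 * * * /path/to/run_briefing.sh evening

KINDS = ("morning", "evening")

# Prompt paraphrased from the article; the exact wording is an assumption.
BASE_PROMPT = ("read important unread emails, check today's calendar, "
               "summarize priorities")


def briefing_prompt(kind: str) -> str:
    """Prompt handed to the Claude Code session for this briefing type."""
    if kind not in KINDS:
        raise ValueError(f"unknown briefing type: {kind}")
    return f"{kind.capitalize()} briefing: {BASE_PROMPT}"


def briefing_path(kind: str, day: date, root: Path = Path("briefings")) -> Path:
    """briefings/YYYY-MM-DD-<kind>.md, as described in the article."""
    if kind not in KINDS:
        raise ValueError(f"unknown briefing type: {kind}")
    return root / f"{day.isoformat()}-{kind}.md"


def save_briefing(kind: str, text: str, day: Optional[date] = None,
                  root: Path = Path("briefings")) -> Path:
    """Write the briefing to disk and return where it was saved."""
    path = briefing_path(kind, day or date.today(), root)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(text, encoding="utf-8")
    return path
```

Keeping one Markdown file per briefing means the history is greppable and can itself be fed back to the assistant as context.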

Repo structure

This whole machine lives in about 20 Python files plus a handful of shell scripts. No database, no backend, no Docker. The full source code of the PA is smaller than a typical React component. This is deliberate: the less code, the less maintenance; and the less abstraction, the easier it is to debug when something breaks. This decision is consistent with what we recommend to our clients in "LLMOps: managing language models in production": operational complexity kills agents more than model complexity does.

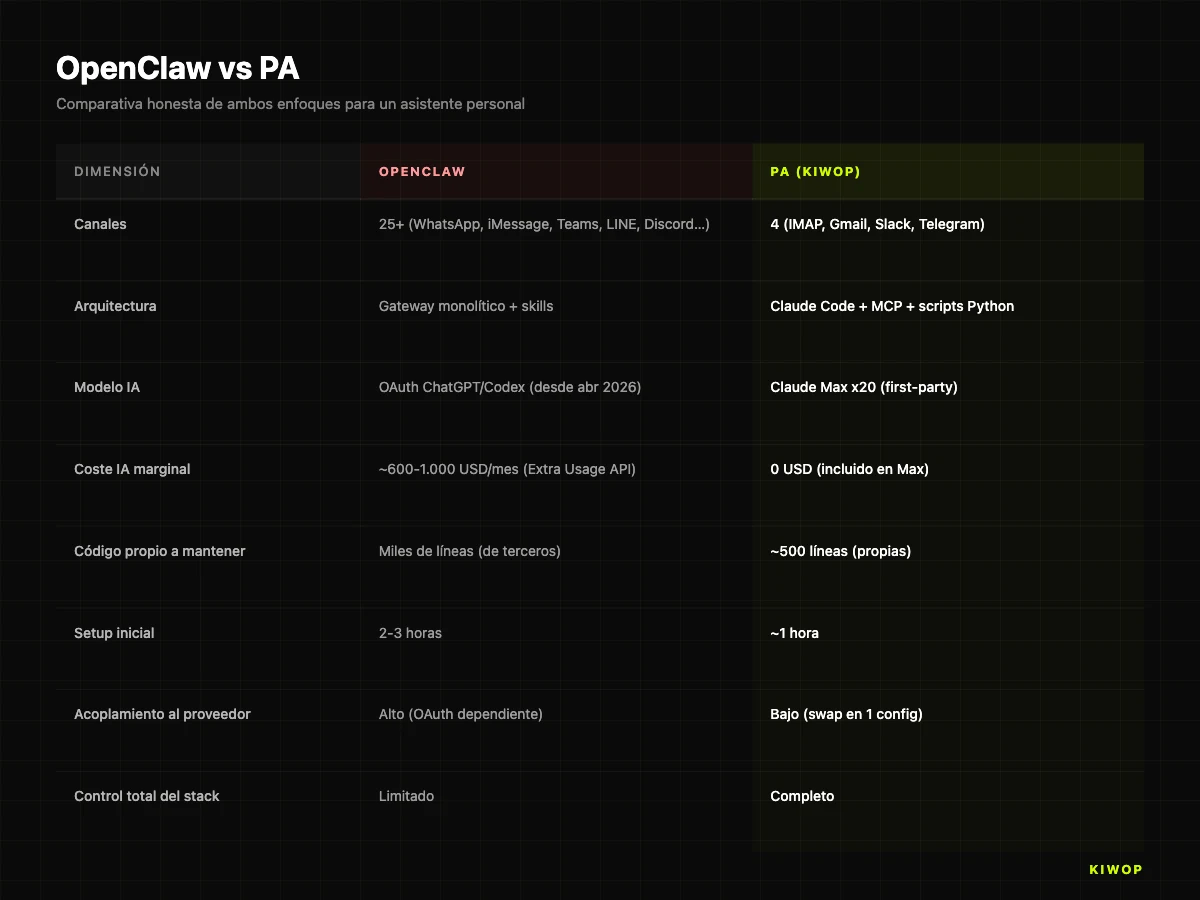

OpenClaw vs PA: the honest comparison

It's not a competition. OpenClaw is still a more complete product, with more channels, an active community, and a clear product roadmap. If someone asks us in 2026 "I want a personal assistant with WeChat, LINE, iMessage and Discord simultaneously", the honest answer is "check out OpenClaw". For the case "I want Telegram + email + calendar + Slack, running on my Claude Max, with code I can audit and modify", the answer is PA.

The choice between one and the other is fundamentally a choice about how much coupling you want with an external provider. OpenClaw gives you more out-of-the-box capabilities in exchange for depending on Anthropic's or OpenAI's OAuth policies. PA gives you fewer capabilities but with the certainty that, as long as you can pay for Claude Max and the MCP connectors for Gmail and the other services keep working, nothing external can leave you without an assistant overnight.


Alternative to OpenClaw in 2026: when it makes sense to build your own PA

We're increasingly being asked whether we would build custom PAs for clients. The short answer is yes, and we're starting to offer it as part of our AI agent development services. The long answer requires nuance about who it makes sense to build a PA for and for whom it's overkill.

When building a PA makes sense:

- For a professional who already manually processes more than 80 emails a day across multiple accounts, and who spends 30–45 minutes a day on triage.

- For someone who already uses Claude Code in development and has a Max subscription, so the marginal cost of the assistant is effectively zero.

- For roles that demand 24/7 availability (founders, executives, solo lawyers, senior consultants) but can't justify hiring a human PA on cost or discretion grounds.

- For anyone who wants full control over their data: the PA doesn't send emails or calendar data to third-party servers beyond the LLM provider's own.

When it does NOT make sense:

- For an employee who already works inside Gmail/Outlook and whose company wouldn't authorize connecting an external LLM to the corporate account. The barrier here is political and security-related, not technical — although in that scenario an enterprise RAG project on controlled infrastructure may be the right answer.

- For agencies that want "a PA that manages all their clients" — that is not a PA, that is a multi-tenant SaaS and the architectural approach should be radically different.

- For anyone who doesn't already have a Claude Max subscription or equivalent: the economic calculation changes a lot if you have to pay pay-as-you-go API rates.

- For anyone expecting an infallible assistant. The PA in its current state can occasionally miss an email in triage, or misinterpret a calendar event. The confirmation rule before acting is precisely there to cover us in those cases.

The broader lesson, beyond whether or not you build your own PA, is a different one: AI agents depend economically on the provider's billing model, and that model can change. Anthropic changed it on April 4th. OpenAI has changed theirs several times since the launch of ChatGPT Pro. Google adjusts theirs regularly. Any agent architecture you want to use for years should anticipate that risk, and the answer isn't technical, it's strategic. Building thin, decoupled layers between your logic and the provider's model is what lets you pivot in 48 hours when the policy changes.

At Kiwop we call this "provider-proof agents". It's not a methodology with a commercial name: it's a way of designing that boils down to three rules. First, the glue between pieces is your own code, never a third-party framework. Second, the prompts and flows are in your files, not in a SaaS. Third, you can change the model with a single configuration file, without touching the rest of the system. PA satisfies all three. If Anthropic closed another door tomorrow, bridging to OpenAI or Google would cost us a few commits and an hour of testing, because all the engineering around it (MCPs, IMAP scripts, Telegram bot, launchd) is model-agnostic.
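The third rule can be illustrated with a very small sketch: the engine is chosen by name in one configuration document, and the rest of the system only ever asks for "the command that answers a prompt". The configuration layout, the function name and the second engine (`other-llm-cli`, a made-up command) are hypothetical and only show the shape of the idea.

```python
import json
import shlex

# Hypothetical configuration: in practice this would live in its own file.
# "other-llm-cli --prompt" is a made-up command, not a real tool.
CONFIG_TEXT = """
{
  "engine": "claude",
  "engines": {
    "claude": "claude -p",
    "other": "other-llm-cli --prompt"
  }
}
"""


def engine_command(config: dict, prompt: str) -> list[str]:
    """Return the argv that sends `prompt` to the configured engine."""
    name = config["engine"]
    # shlex.split keeps quoting rules predictable for multi-word commands.
    return shlex.split(config["engines"][name]) + [prompt]


config = json.loads(CONFIG_TEXT)
print(engine_command(config, "summarize today's unread email"))
```

Switching providers then means editing the `engine` value; the IMAP scripts, the Telegram bot and the scheduler never learn which model sits behind the command.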

Frequently asked questions

What is the best alternative to OpenClaw in 2026?

The best alternative depends on the use case. For those who already have a Claude Max subscription, the most economical option is to build your own personal assistant on top of Claude Code + MCP (zero marginal cost). For those who prefer a ready-made product, OpenClaw is still valid by paying Anthropic's "Extra Usage" or switching the OAuth to ChatGPT/Codex. For regulated corporate environments, we recommend evaluating on-premise solutions or an enterprise RAG project on controlled infrastructure.

Can I still use OpenClaw with my Claude Pro or Max subscription?

No. As of April 4, 2026, Claude Pro and Claude Max OAuth tokens stopped working with OpenClaw and other third-party harnesses. OpenClaw still works, but you have to pay for usage separately, either via Anthropic's Extra Usage (API rates) or by switching its OAuth to an OpenAI subscription (ChatGPT/Codex). For customers processing high volumes, the cost jump reported by the community has been significant.

What is MCP (Model Context Protocol)?

MCP is the open standard that Anthropic published in late 2024 so that language models can connect to external tools, databases and APIs in a uniform way. In 2026 it is supported by the main AI clients — including Claude Code, Cursor, Windsurf and others — and the list of available MCP servers (Gmail, Calendar, Drive, Slack, Linear, GitHub, Notion, etc.) grows week by week. The PA uses MCP as the main access path to Gmail, Google Calendar, Google Drive and Slack.

How much does it cost to build your own PA?

If you already have a Claude Max x20 subscription (about $200/month), the marginal cost is zero from the AI model standpoint. The build time, if you do it yourself from scratch, is around 20–30 hours spread over a week. If you outsource it to an agency, it depends on the scope (which channels, which MCP integrations, whether voice is required or not), but for the standard PA scope described in this article we estimate a build cost between €4,000 and €7,000, with very low maintenance thereafter.

Why Claude Code and not OpenAI Codex?

Claude Code is a better fit for the personal assistant model for two very concrete reasons. First, the quality of Opus 4.7 on email triage and calendar management tasks was superior to GPT-5 in our internal tests as of March 2026 (qualitative evaluation, not formal publishable methodology). Second, Claude Code is a first-party Anthropic product and remains covered by the Max subscription, whereas using Codex from within our already-built stack would have meant paying for a double subscription (Max for development, ChatGPT Pro for PA) with no clear functional gain.

Is the PA safe for confidential email?

The PA is as safe as the model provider you connect to. In Anthropic's case, processed emails are not used to train models (Anthropic's public policy as of 2026) and communication is encrypted. That said, any PA that passes sensitive content through an external LLM implies an act of trust in that provider. For regulated content (healthcare, legal, financial with professional secrecy), we recommend reviewing the specific terms and considering on-premise alternatives.

Can WhatsApp be integrated?

As of today, WhatsApp does not have an official MCP and unofficial bridges (like the ones OpenClaw integrates) require maintaining a WhatsApp Business API account or less stable techniques. The PA in its current version does not natively integrate WhatsApp — we use Telegram as the primary mobile channel for stability. We are evaluating Meta's WhatsApp Cloud API MCP for a second iteration.

Can the PA act without human supervision?

By design, no. The mandatory confirmation rule before any write action (send email, create event, reply on Slack) is the core of the assistant's safety. Viewing an email, reading the calendar, searching in Drive — those are all read operations and it does them without confirming. But sending, creating, moving, deleting — always require an explicit "yes" from the human. It is a conscious architectural decision: we prefer a slightly less agile PA that does not make irreversible mistakes.
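In the PA the rule is stated in CLAUDE.md rather than enforced in code, but the same read/write split can be expressed as a small guard for anyone who wants a second line of defense in their own scripts. The action names and the `run_action` helper are hypothetical.

```python
from typing import Callable, Optional

# Hypothetical action names grouped as the answer above describes.
READ_ACTIONS = {"view_email", "read_calendar", "search_drive"}
WRITE_ACTIONS = {"send_email", "create_event", "move_event",
                 "delete_event", "reply_slack"}


def run_action(name: str, execute: Callable[[], str],
               confirm: Callable[[str], str]) -> Optional[str]:
    """Run reads directly; run writes only after an explicit 'yes'."""
    if name in READ_ACTIONS:
        return execute()
    if name in WRITE_ACTIONS:
        answer = confirm(f"Run '{name}'? Type yes to confirm: ")
        if answer.strip().lower() == "yes":
            return execute()
        return None  # declined: nothing was changed
    # Unknown actions are rejected rather than guessed at.
    raise ValueError(f"unknown action: {name}")
```

Passing the built-in `input` as `confirm` gives the interactive behavior in a terminal; in the Telegram channel the same role would be played by waiting for the user's next message.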

Does this architecture work on Windows or Linux?

Yes, with adjustments. The concepts (Claude Code + MCP + Python scripts + Telegram daemon) are portable. What changes is the process manager at startup: on macOS we use launchd, on Linux it would be systemd with user units, and on Windows the Task Scheduler. The Keychain for IMAP credentials also has equivalents on other platforms (Secret Service on Linux, Credential Manager on Windows).
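For the macOS case, the launchd job that keeps a daemon alive can be generated with Python's plistlib. This is a sketch under assumptions: the label, script path and log path are placeholders, and only standard launchd keys are used (`RunAtLoad` to start at login, `KeepAlive` to relaunch the process if it exits).

```python
import plistlib


def launchd_job(label: str, program_args: list[str], log_path: str) -> bytes:
    """Serialize a launchd agent definition as an XML property list."""
    job = {
        "Label": label,
        "ProgramArguments": program_args,
        "RunAtLoad": True,   # start when the agent is loaded at login
        "KeepAlive": True,   # relaunch the process if it exits
        "StandardOutPath": log_path,
        "StandardErrorPath": log_path,
    }
    return plistlib.dumps(job)


# Placeholder values: adapt the label and paths to your own setup.
xml = launchd_job(
    "com.example.pa.telegram",
    ["/usr/bin/python3", "/path/to/telegram_bot.py"],
    "/tmp/pa-telegram.log",
)
print(xml.decode())
```

Per-user agents of this kind are normally saved under ~/Library/LaunchAgents. On Linux the equivalent would be a systemd user unit with a restart policy, as noted above.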

Conclusion: AI agents and provider risk

Anthropic's policy change on April 4, 2026 did us a favor. It forced us to ask ourselves whether we wanted to keep outsourcing a critical piece of our daily productivity to a third-party project, however brilliant, when we had all the pieces to build it ourselves on a stack we already mastered. The answer, with two weeks of perspective, is clear: we didn't want to. And we don't want to, for any critical layer of our work.

The lesson is not "everyone should build their PA". The lesson is that in 2026, with AI models increasingly capable and with MCP consolidating as the integration standard, the distance between using a third-party agent and building your own has shrunk dramatically. What in 2023 would have required a team of three engineers for three months is today about 500 lines of Python and 20–30 hours of work. That lowered barrier is what defines the current wave of applied AI, and it best explains why software agencies in 2026 are in such a different position from two years ago.

If you're thinking about setting up your own personal assistant, or if you need help building robust AI agents for your team or company, at Kiwop — Digital agency specialized in Software Development and Applied Artificial Intelligence for global clients in Europe and the US — we offer custom AI agent development and artificial intelligence consulting for production contexts. The PA we describe here is the best living example of how we do it for ourselves.