MCP, WebMCP and A2A: Which Protocol to Choose for Your AI Agents

By the Kiwop team · Digital agency specialized in Software Development and Applied Artificial Intelligence · Published on April 19, 2026 · Last updated: April 19, 2026

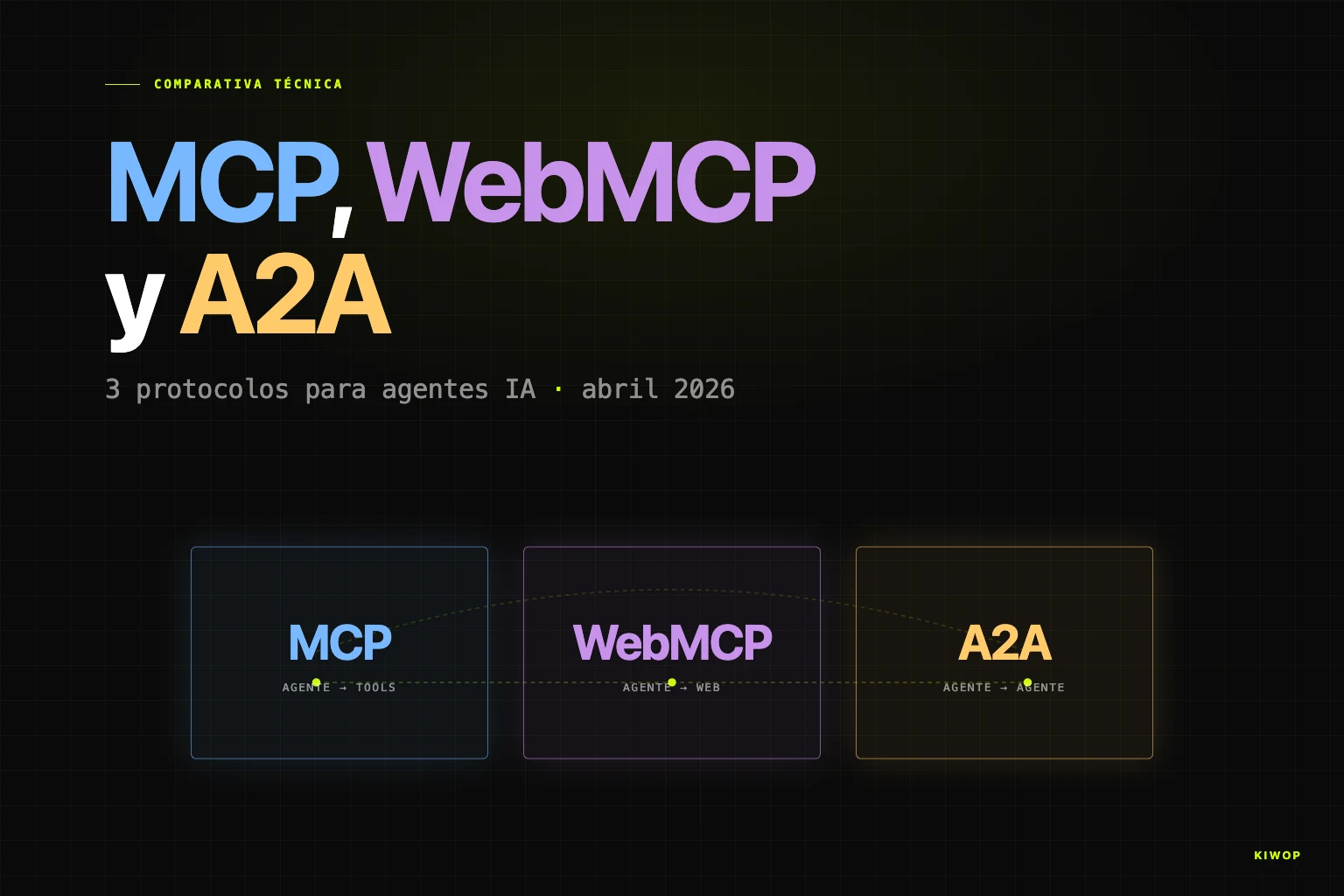

TL;DR — Today three key protocols for AI agents coexist. MCP (Model Context Protocol) connects an agent to its tools and data; it's the de facto standard, donated to the Linux Foundation. WebMCP is the browser variant for websites to expose actions to agents; still emerging. A2A (Agent-to-Agent) connects agents to each other; v1.0 in production with 150+ organizations. Use MCP for tools, A2A to orchestrate and WebMCP for your web.

Every week we get the same question from executive committees and product teams: "We're building an AI agent; which protocol do we use?" The right answer is no longer "MCP, full stop". It was in 2025, but 2026 has brought a constellation of standards that complement each other more than they compete. What's interesting isn't which one wins; it's which one solves each problem.

This article is the technical comparison that would have saved us weeks of research six months ago. It's based on our real experience building personal assistants with Claude Code and MCP, on internal work on Nexo (our workspace SaaS in development), and on the most recent official sources published by Anthropic, Google, Cloudflare, Vercel, the Linux Foundation and the W3C itself. If you're looking for a binary answer, you won't find it here. If you're looking for decision criteria, keep reading.

Why protocols are the new battleground for AI agents

2023 was the year of the prompt. 2024 was the year of tool use. 2025 was the year of the agent. And today protocols have become the new axis of architectural decision-making. The reason is simple: when there's a single agent talking to a single API, any ad hoc contract works. When you have dozens of agents, dozens of models from different providers, hundreds of internal and external tools, and clients who want to connect their own agents to yours, chaos eats the product.

The explosion has been brutal. Anthropic reports more than 10,000 active public MCP servers and 97 million monthly SDK downloads between Python and TypeScript according to the official note on MCP's donation to the Linux Foundation. A2A, for its part, went from a Google proposal in April 2025 to having more than 150 organizations and being under the Linux Foundation umbrella by early 2026. On December 9, 2025 the Agentic AI Foundation (AAIF) was formalized under the Linux Foundation, with Anthropic, OpenAI, Google, Microsoft, AWS, Block, Cloudflare and Bloomberg as platinum members — a move covered by TechCrunch that marks the end of the era of proprietary protocols.

The current snapshot is as follows. There are three protocols any technical team needs to understand: MCP, WebMCP and A2A. There's a fourth, ACP (Agent Communication Protocol) from IBM, which conceptually overlaps with A2A and whose adoption to date is considerably lower. And there's a swarm of adjacent initiatives — AGENTS.md, Open Responses, ANP, LLMFeed — orbiting without yet reaching the same critical mass. We'll focus on the three that matter for real architecture decisions.

Fragmentation is a real problem. As noted in the arXiv academic survey on agent interoperability protocols, each protocol solves a different layer of the stack: the problem isn't choosing one, it's understanding which layer each operates in and combining them without creating unnecessary coupling. That's the thesis we're going to develop.

MCP — Model Context Protocol (Anthropic, 2024)

MCP is, as of April 2026, the de facto standard for connecting an AI agent with external tools. It was published by Anthropic in November 2024 as an open protocol, and in its first year it traveled the road other standards have taken a decade to cover: from an individual proposal to shared industry infrastructure.

What MCP is conceptually

MCP defines a contract between two parties: an MCP client (generally the agent or model host) and an MCP server (generally an adapter to an external service: Gmail, GitHub, a database, an internal system). The client discovers what tools the server offers, reads its schema, and invokes them with typed parameters. Communication uses JSON-RPC 2.0 over a transport.
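To make the discover-then-invoke contract concrete, here is a minimal sketch in Python. It uses the `tools/list` and `tools/call` method names from the MCP specification, but the server is a toy in-memory dispatcher, the `get_unread_count` tool is hypothetical, and the `initialize` handshake that a real session starts with is omitted.

```python
import json
from itertools import count

# Hypothetical in-memory "server": one tool described by a JSON Schema.
TOOLS = {
    "get_unread_count": {
        "description": "Return the number of unread messages under a label.",
        "inputSchema": {
            "type": "object",
            "properties": {"label": {"type": "string"}},
            "required": ["label"],
        },
        "handler": lambda args: {"INBOX": 3}.get(args["label"], 0),
    }
}

_ids = count(1)


def make_request(method, params=None):
    """Build a JSON-RPC 2.0 request envelope."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def handle(request):
    """Toy server-side dispatcher covering only the two tool methods."""
    method, params = request["method"], request.get("params", {})
    if method == "tools/list":
        result = {"tools": [
            {"name": name, "description": t["description"],
             "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]}
    elif method == "tools/call":
        tool = TOOLS.get(params["name"])
        if tool is None:
            return {"jsonrpc": "2.0", "id": request["id"],
                    "error": {"code": -32602, "message": "Unknown tool"}}
        value = tool["handler"](params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": str(value)}]}
    else:
        return {"jsonrpc": "2.0", "id": request["id"],
                "error": {"code": -32601, "message": "Method not found"}}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


# Client side: discover the tools first, then invoke one with typed arguments.
listing = handle(make_request("tools/list"))
call = handle(make_request(
    "tools/call",
    {"name": "get_unread_count", "arguments": {"label": "INBOX"}},
))
print(json.dumps(call, indent=2))
```

In a real deployment the two sides live in different processes and the envelopes travel over one of the transports described next; the shape of the messages is what stays constant.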

The protocol doesn't only cover tools: it also defines resources (statically readable content, such as files or records), prompts (reusable templates) and, since the November 25, 2025 specification, asynchronous operations, stateless servers, server identity and official extensions, per the official specification. This evolution is what enabled MCP to go from "connecting a local client" to "powering agent infrastructure in production".

Transports: stdio and Streamable HTTP

MCP supports two official transports according to the transports documentation of the spec:

- stdio: the client starts the server as a local subprocess and communicates via standard input/output. Ideal for local runs, CLI and personal assistants.

- Streamable HTTP: the server lives as an independent process and exposes a single HTTP endpoint accepting POST and GET. It can use Server-Sent Events for streaming. It's the de facto standard for remote servers and replaces the old HTTP+SSE transport.

Quick rule: stdio for personal assistants on one machine, Streamable HTTP for shared services in production.
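As a rough illustration of the stdio transport, the sketch below launches a child process and exchanges one newline-delimited JSON-RPC message with it, which is the framing the stdio transport relies on. The child here is a hypothetical echo script, not an MCP server: it only shows how a client owns the subprocess and talks to it through pipes.

```python
import json
import subprocess
import sys

# Hypothetical child "server": reads one JSON-RPC request per line on stdin
# and answers on stdout. A real MCP server would implement the full protocol.
CHILD_SOURCE = r'''
import json, sys
for line in sys.stdin:
    req = json.loads(line)
    resp = {"jsonrpc": "2.0", "id": req["id"], "result": {"echo": req["method"]}}
    sys.stdout.write(json.dumps(resp) + "\n")
    sys.stdout.flush()
'''


def call_over_stdio(proc, request):
    """Send one newline-delimited JSON message and read one reply line."""
    proc.stdin.write(json.dumps(request) + "\n")
    proc.stdin.flush()
    return json.loads(proc.stdout.readline())


# The client starts the server as a local subprocess, as in the stdio bullet.
proc = subprocess.Popen(
    [sys.executable, "-c", CHILD_SOURCE],
    stdin=subprocess.PIPE,
    stdout=subprocess.PIPE,
    text=True,
)
try:
    reply = call_over_stdio(proc, {"jsonrpc": "2.0", "id": 1, "method": "ping"})
finally:
    proc.stdin.close()    # EOF lets the child leave its read loop
    proc.wait(timeout=5)

print(reply)
```

With Streamable HTTP the same JSON-RPC envelope would be sent as the body of a POST to the server's single endpoint instead of being written to a pipe.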

Available servers and ecosystem

Currently, the MCP ecosystem covers virtually any relevant enterprise service: Gmail, Google Calendar, Google Drive, Slack, Linear, GitHub, Notion, Jira, Confluence, Salesforce, HubSpot, Stripe, PostgreSQL, Snowflake, BigQuery and dozens more. Adoption crosses provider boundaries: OpenAI supported it natively in ChatGPT in March 2025, Microsoft integrated it in Copilot, Google supports it in Gemini, and editors like Cursor, Windsurf and VS Code speak MCP natively.

Optimal use cases

MCP shines when there's an agent that needs access to many heterogeneous sources: a personal assistant reading your email and calendar, a development copilot querying your code and your tickets, a sales agent crossing CRM and billing data. In all those cases, the pattern is the same: the agent invokes typed tools on servers that talk to specific systems.

Limitations

MCP isn't the right tool when traffic is agent↔agent (that's where A2A comes in), when the consumer is a browser and not a process with pre-installed OAuth credentials (that's where WebMCP comes in), or when the operation requires massive binary streams or submillisecond latencies (that's where specific APIs come in). And although adoption is huge, security remains an active area of work: there's growing literature on tool poisoning, prompt injection via tool descriptions, and permissions that are difficult to audit. Any MCP agent in production needs a sandboxing and confirmation layer that the protocol itself doesn't impose.
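One possible shape for that confirmation layer is a small gate between the model's tool request and the actual invocation. This is a sketch under our own assumptions: `ToolPolicy`, `guarded_call` and the tool names are hypothetical and not part of MCP or any SDK.

```python
from dataclasses import dataclass, field


@dataclass
class ToolPolicy:
    """Hypothetical policy: which tools may run, and which need a human OK."""
    allowed: set = field(default_factory=set)
    needs_confirmation: set = field(default_factory=set)


class ToolCallDenied(Exception):
    """Raised when a tool call is blocked by policy or declined by the user."""


def guarded_call(policy, confirm, invoke, name, arguments):
    """Run `invoke` only if the tool is allowlisted and, where required,
    the `confirm` callback (for example a UI prompt) returns True."""
    if name not in policy.allowed:
        raise ToolCallDenied(f"tool {name!r} is not on the allowlist")
    if name in policy.needs_confirmation and not confirm(name, arguments):
        raise ToolCallDenied(f"user declined {name!r}")
    return invoke(name, arguments)


policy = ToolPolicy(
    allowed={"read_email", "send_email"},
    needs_confirmation={"send_email"},   # side effects require a human
)


def fake_invoke(name, arguments):
    """Stand-in for the real MCP client call."""
    return f"{name} ok"


print(guarded_call(policy, lambda n, a: True, fake_invoke, "read_email", {}))
```

The point of the design is that the gate sits outside the model: a poisoned tool description can change what the model asks for, but not what the policy lets through.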

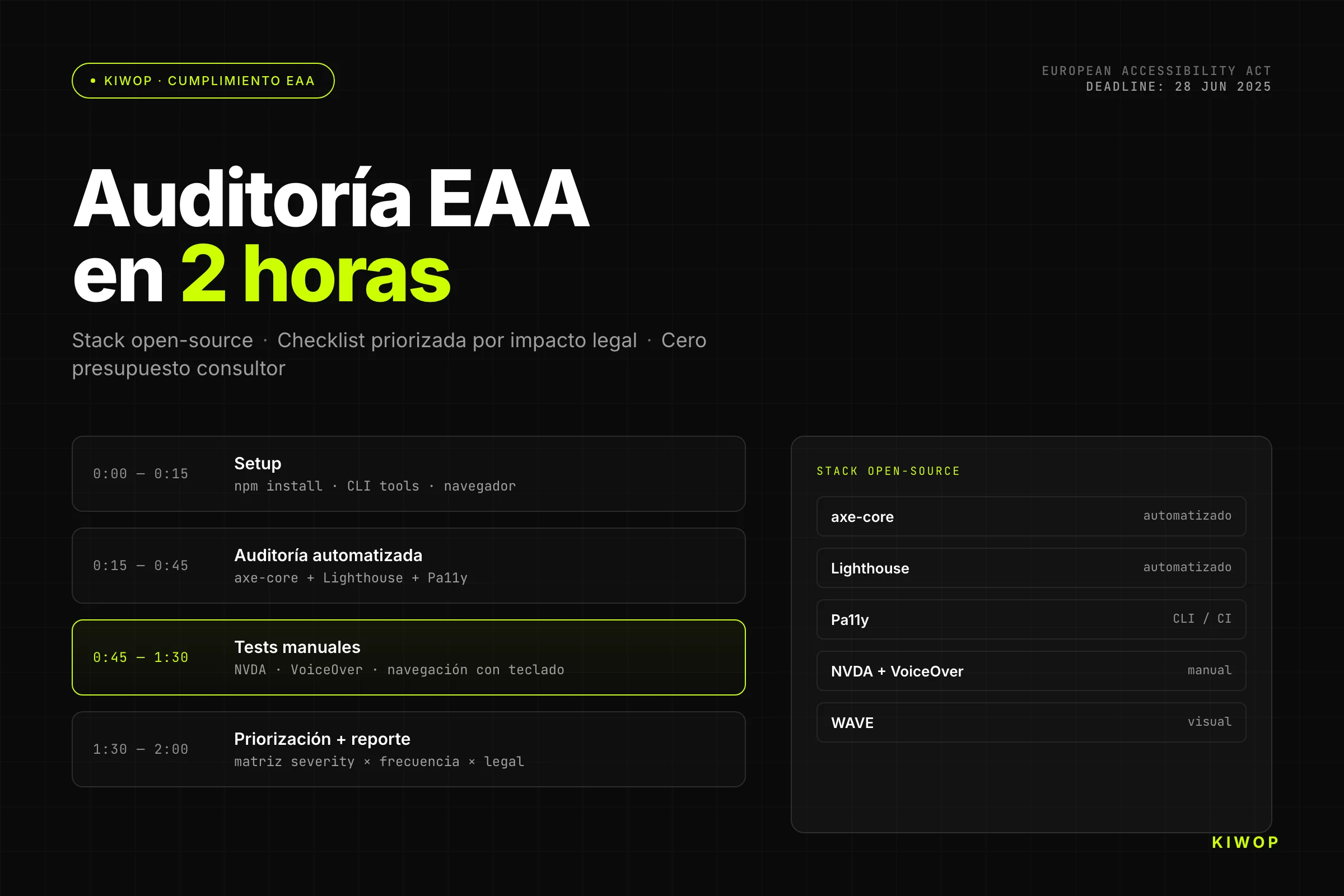

WebMCP — MCP for the web

WebMCP is Google and Microsoft's bet on taking the MCP philosophy to the browser and to the web itself. It's not an extension of MCP: it's a parallel standard, coordinated within the W3C Web Machine Learning Community Group, that adapts the client-server contract to the browser's specific environment. We already covered the initial proposal in "WebMCP: your website ready for AI agents"; here we focus on how it positions itself against MCP and A2A.

Why WebMCP and not simply MCP over HTTP

A priori it sounds redundant: if MCP already has a Streamable HTTP transport, why another standard? The WebMCP ecosystem is still in early preview, but the reason it exists goes beyond immaturity. The technical answer is that the browser is a radically different environment from a server. In the browser:

- Authentication is managed by the user, not the agent (the session cookie lives in the browser, it doesn't travel with the agent).

- Actions have to be visible and supervisable by the human (there's no room for opaque automations).

- The exposed surface is that of the visited website, not a dedicated endpoint.

- Discoverability happens when the user navigates to the page, not through a global registry.

WebMCP solves exactly those differences. According to the official Cloudflare Browser Run documentation on WebMCP, the website exposes a set of tools — for example searchFlights() or bookTicket() — with typed parameters. An agent navigating the page can discover those tools, read their schemas and call them directly without simulating clicks or scraping the DOM.
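To show what "typed parameters" buys the agent, the sketch below models a tool descriptor as plain data and checks a call against its schema before invoking it. It is written in Python for consistency with the other examples and is not the browser API: the descriptor fields and the `validate_arguments` helper are assumptions for illustration, and the check covers only `required` and primitive `type`, not full JSON Schema.

```python
# Hypothetical descriptor for a site-exposed tool; the field names are
# illustrative and do not reproduce the WebMCP draft.
SEARCH_FLIGHTS = {
    "name": "searchFlights",
    "description": "Search flights between two airports on a given date.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "origin": {"type": "string"},
            "destination": {"type": "string"},
            "date": {"type": "string"},
            "passengers": {"type": "integer"},
        },
        "required": ["origin", "destination", "date"],
    },
}

_PY_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


def validate_arguments(tool, arguments):
    """Return a list of problems; an empty list means the call is well-formed."""
    schema = tool["inputSchema"]
    problems = [f"missing: {key}" for key in schema.get("required", [])
                if key not in arguments]
    for key, value in arguments.items():
        spec = schema["properties"].get(key)
        if spec is None:
            problems.append(f"unknown: {key}")
        elif isinstance(value, bool) and spec["type"] != "boolean":
            # bool is a subclass of int in Python, so reject it explicitly.
            problems.append(f"wrong type: {key}")
        elif not isinstance(value, _PY_TYPES[spec["type"]]):
            problems.append(f"wrong type: {key}")
    return problems


good = {"origin": "BCN", "destination": "LIS", "date": "2026-05-01", "passengers": 2}
print(validate_arguments(SEARCH_FLIGHTS, good))
```

Compared with scraping the DOM, a malformed call fails here with an explicit reason instead of a silent mis-click.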

Implementation: Cloudflare and Vercel

The two providers that have moved fastest are Cloudflare and Vercel, although with different approaches.

- Cloudflare added WebMCP support to its Browser Run product (previously Browser Rendering) on April 15, 2026, according to its official changelog. The integration lets an AI agent connect its MCP client against Browser Run via the CDP endpoint and discover tools exposed by any site that implements WebMCP — even when that site doesn't control the infrastructure.

- Vercel has oriented its product toward deploying MCP servers on its Functions platform, leveraging Fluid Compute to optimize the irregular usage patterns typical of agents. The official Vercel MCP documentation describes how to set up Streamable HTTP endpoints with built-in OAuth, and its AI SDK includes an experimental MCP client. Although Vercel is more classic MCP than pure WebMCP, it's the reference provider when your backend already lives on Next.js.

Optimal use cases

WebMCP is the right answer when your product is a public website and you want AI agents to use it well: e-commerce (search, filters, cart, checkout), SaaS with complex forms (configurators, quotes), marketplaces, booking systems. Every case where a human user would use buttons and forms and you want an agent to do the same with less friction.

Current adoption status

Here we have to be honest: WebMCP is emerging, not mature. Chrome supports it experimentally in Canary, Cloudflare has partial production support, and the AB Magency coverage of the "agentic format war" notes that WebMCP competes simultaneously with proposals like Cloudflare's Markdown for Agents (released February 12, 2026). Both coexist; neither has won. For a team that needs to decide today, WebMCP is a reasonable future bet for sites with predictable agent traffic, but it's not a standard to bet the entire architecture on.

A2A — Agent-to-Agent protocol

If MCP connects an agent to its tools, A2A connects agents to each other and, as of April 2026, is the horizontal standard of reference. It's the difference between "a worker uses their tools" and "a worker delegates a task to another worker". It's not an academic distinction: it solves real problems that MCP cannot solve.

Origin and current status

A2A was announced by Google at Google Cloud Next 2025 on April 9, 2025, with more than 50 launch partners (Accenture, Atlassian, Box, Cohere, Deloitte, LangChain, MongoDB, PayPal, Salesforce, SAP, ServiceNow, UiPath, among others). In June 2025 Google donated it to the Linux Foundation. In March 2026 A2A v1.0 was published, the version the community considers fit for production. The official documentation at a2a-protocol.org describes the model in detail; the upgrade announcement on the Google Cloud Blog documents the v1.0 improvements.

Architecture: Agent Cards and discovery

The central concept of A2A is the Agent Card: a JSON document that describes what an agent can do, what skills it exposes, what authentication it requires and where to contact it. Agents discover each other by reading other agents' Agent Cards, much as web services are described through OpenAPI documents. In v1.0, Agent Cards carry a cryptographic signature (Signed Agent Cards), which allows verifying that a card was issued by the agent's owning domain. That is an important improvement against impersonation.

Transport is JSON-RPC 2.0 over HTTP, Server-Sent Events, or gRPC, with authentication via API keys, HTTP auth, OAuth 2.0/OIDC and mutual TLS. That is: the same pieces any enterprise backend has been using for a decade, without reinventing anything.
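A simplified sketch of card-based discovery follows. The cards below keep only a handful of fields in the general shape of the public A2A examples; they are not the complete v1.0 schema, they carry no signature, and the agents, URLs and the `agents_with_skill` helper are hypothetical.

```python
import json

# Illustrative Agent Cards; not the complete or authoritative schema.
CARDS = json.loads("""
[
  {
    "name": "Travel Agent",
    "description": "Finds and books flights.",
    "url": "https://agents.example.com/travel",
    "version": "1.0.0",
    "skills": [
      {"id": "find-flight", "name": "Find flight", "tags": ["travel"]},
      {"id": "book-flight", "name": "Book flight", "tags": ["travel"]}
    ]
  },
  {
    "name": "Hotel Agent",
    "description": "Books hotel rooms.",
    "url": "https://agents.example.com/hotel",
    "version": "1.0.0",
    "skills": [
      {"id": "book-hotel", "name": "Book hotel", "tags": ["travel"]}
    ]
  }
]
""")


def agents_with_skill(cards, skill_id):
    """Return the endpoint URL of every agent whose card lists the skill."""
    return [
        card["url"]
        for card in cards
        if any(skill["id"] == skill_id for skill in card.get("skills", []))
    ]


print(agents_with_skill(CARDS, "find-flight"))
```

An orchestrator would then open a task against the returned URL using one of the transports and authentication schemes listed above, after verifying the card's signature where one is present.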

Optimal use cases

A2A is the right answer when there are several specialized agents that need to collaborate: an orchestrator agent that delegates "find a flight" to a travel agent, "book a hotel" to a hotel agent, and "pay" to a payments agent. Each specialized agent has its own tools (MCP internally) and offers its Agent Card externally to be orchestrated. Typical scenarios: multi-provider purchasing concierges, multi-department workflows in large enterprises, agent marketplaces.

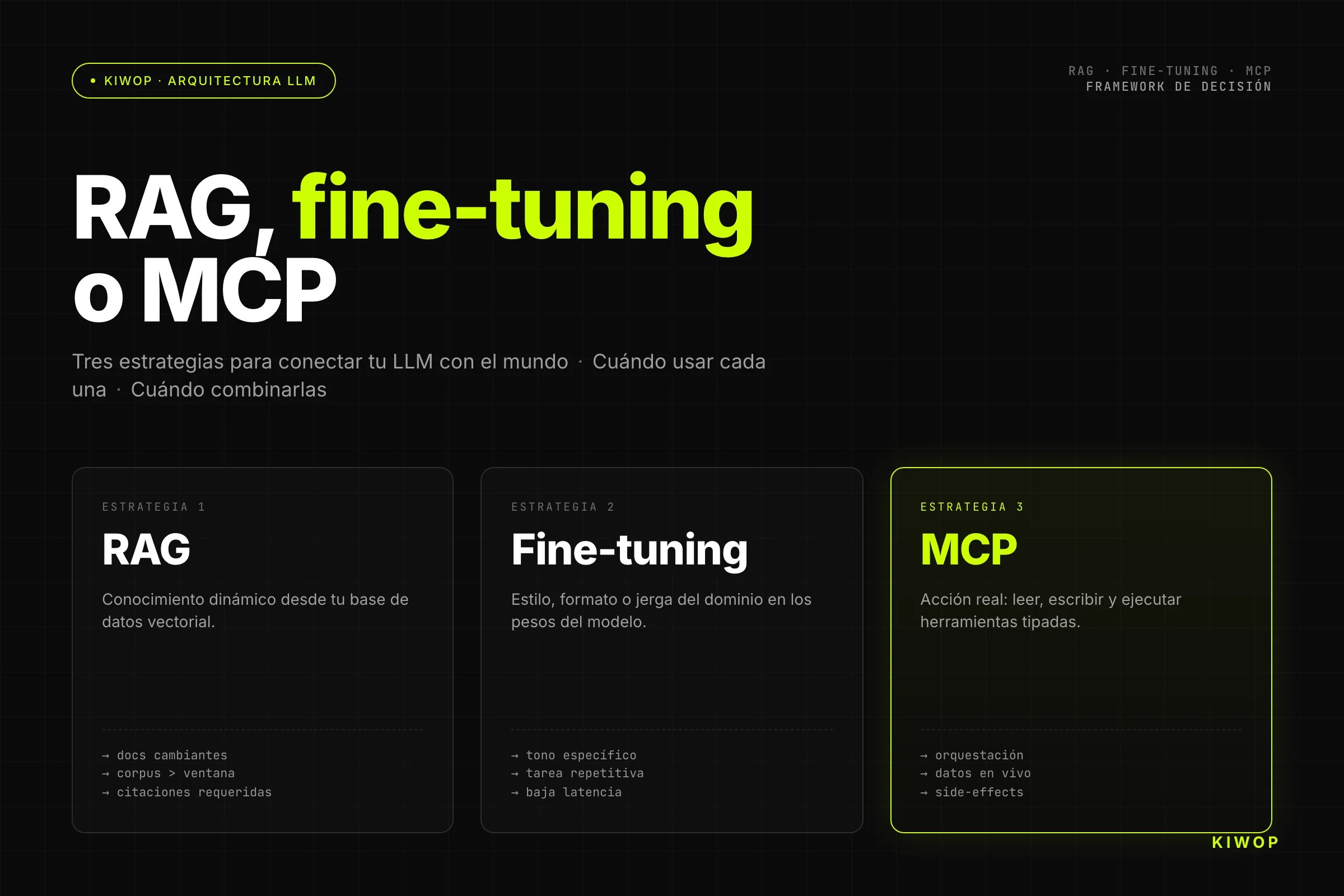

Fundamental difference from MCP

The key, well summarized by IBM in their article on A2A, is that MCP is vertical (agent↔tool) and A2A is horizontal (agent↔agent). Both are complementary. An A2A agent can internally use MCP to access its data. An MCP agent may never need A2A if it's a single agent. The decision isn't "MCP or A2A"; it's "I need one, the other, or both".

Limitations and maturity

A2A v1.0 is recent. Although there are production deployments at Microsoft, AWS, Salesforce, SAP and ServiceNow according to Stellagent's coverage, the ecosystem of interoperable agents is still small compared to the MCP tools ecosystem. Building with A2A today means building many of the pieces that MCP already has mature: libraries, gateways, debuggers, observability. If your problem is "an agent with many tools", A2A adds complexity without solving anything new.

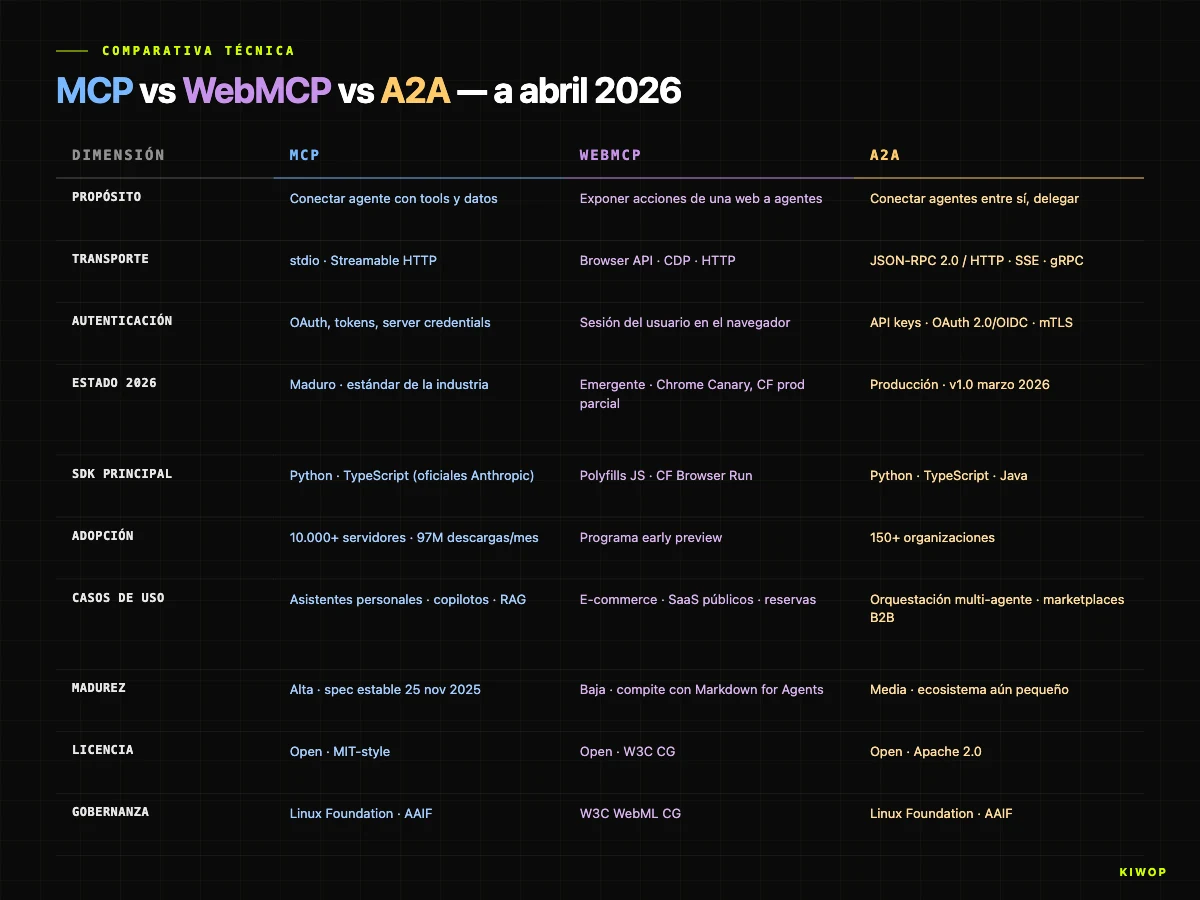

Comparison table: MCP vs WebMCP vs A2A

The table is dense on purpose. If we had to summarize it in one sentence: MCP rules inside the agent, A2A rules between agents, WebMCP rules in the browser. Any architectural decision starts by identifying where you are and working from there.

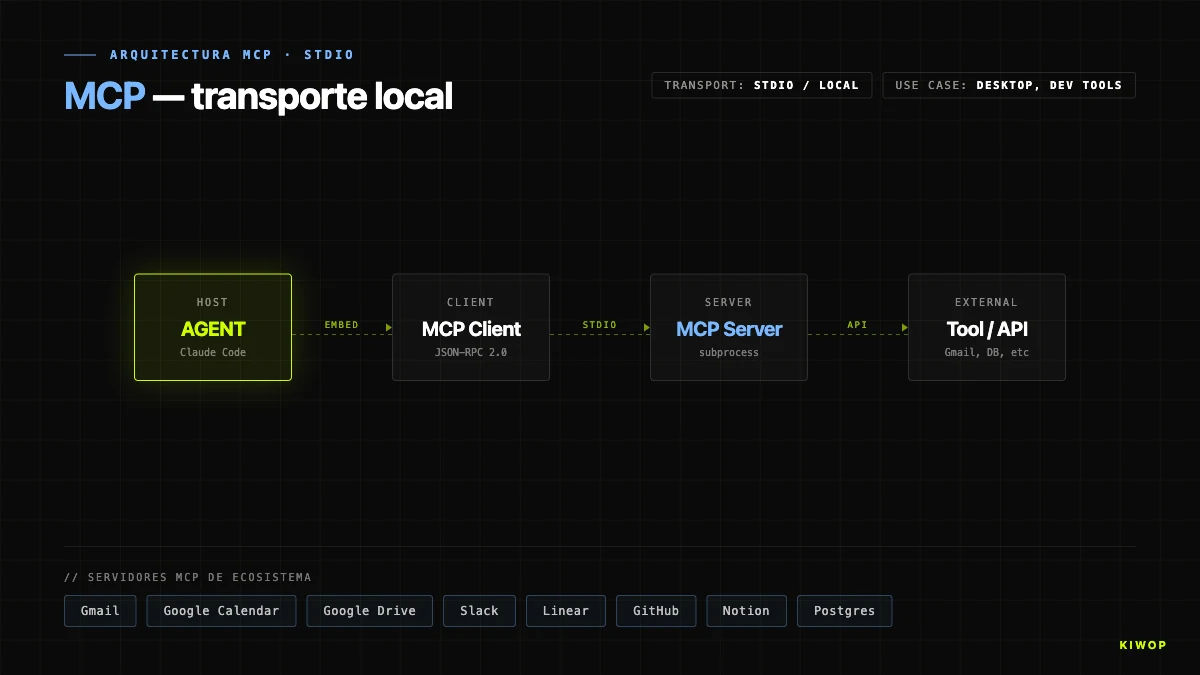

Real example 1: Kiwop's PA uses MCP stdio

Our PA (Personal Assistant), the personal AI assistant we built at Kiwop after Anthropic's OAuth cut on April 4, 2026 (documented in detail in "From OpenClaw to a PA with Claude Code and MCP"), is a textbook case of optimal MCP stdio use.

The architecture in one sentence

Claude Code runs on a local machine (macOS, with launchd as process manager). It needs access to Gmail, Google Calendar, Google Drive and Slack. For each of those four services, we have a local MCP server launched as a subprocess of the session. The MCP client embedded in Claude Code launches the servers via stdio, discovers their tools, and invokes them when needed.
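For readers who want to picture the wiring, the sketch below generates a configuration in the `mcpServers` layout that Claude Code reads from a project-level `.mcp.json` file, with one stdio entry per service. It is not our production configuration: the `pa_servers.*` module names and the launch command are placeholders, to be replaced with the real command of whichever MCP servers you install.

```python
import json

# The four services named above; server identifiers are our own labels.
SERVICES = ["gmail", "calendar", "drive", "slack"]


def build_mcp_config(services):
    """Build one stdio server entry per service.

    The command and module path are hypothetical placeholders; only the
    overall `mcpServers` -> name -> command/args/env layout is the point.
    """
    return {
        "mcpServers": {
            name: {
                "command": "python",
                "args": ["-m", f"pa_servers.{name}"],
                "env": {},   # secrets stay out of the file (e.g. in a keychain)
            }
            for name in services
        }
    }


config = build_mcp_config(SERVICES)
print(json.dumps(config, indent=2))
```

Each entry describes a process the client will spawn and talk to over stdin/stdout, which is why no ports or URLs appear anywhere in the file.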

Why stdio and not Streamable HTTP

The temptation was to deploy the MCP servers on an HTTP endpoint and have Claude Code connect over the network. We discarded it for three reasons:

- Credentials: the OAuth tokens for Gmail, Calendar, Drive and Slack live in the macOS Keychain. Exposing those tokens over the network would involve tunneling, rotation, and a secrets model we didn't want.

- Latency: stdio is a local process with a UNIX pipe, so the transport itself adds well under a millisecond per invocation. An HTTP endpoint adds network time without functional gain.

- Operational simplicity: the day something breaks, inspecting a local process is infinitely easier than debugging a remote service.

The decision fits the general logic we describe in "LLMOps: managing language models in production": keeping close what can be kept close reduces the failure surface. That's the philosophy we'll also reflect in the upcoming post on patterns and antipatterns of AI agents in production.

What we have NOT touched

Neither WebMCP nor A2A. The PA is a single agent (Claude Code) with many tools, operated by a single person. Introducing A2A would be pure over-engineering: there's no other agent to coordinate with. Introducing WebMCP doesn't apply: there's no browser in the loop, and the MCP services already cover everything we need.

This is important: a good design is as valuable for what it leaves out as for what it includes. Every protocol added is code to maintain, attack surface, and dependency on provider policies.

Real example 2: Nexo (our SaaS) plans WebMCP for clients

Nexo is the internal SaaS we're building at Kiwop: a collaborative workspace with integrated agent capabilities. Without getting into product details (it'll launch officially later), we can talk about the architectural decision on protocols, because it perfectly illustrates the dilemma most SaaS products will face in the next 12–18 months.

The problem: clients who want to connect their own agents

The beta clients asked us for two very different things:

- "We want our own agents (Claude, GPT, our internal agents) to access our workspace data in Nexo."

- "We want your internal agents in Nexo to collaborate with the agents our company already has."

Those are two completely different protocol problems.

Decision: WebMCP (and remote MCP) for the first, A2A for the second

For the first problem (clients connecting external agents to their data in Nexo) we decided to expose a Streamable HTTP MCP server per tenant and, in parallel, prepare WebMCP endpoints so that when users navigate Nexo's UI from a browser with an agent, that agent can discover the available actions without scraping the DOM. The tools exposed are the same in both transports; who consumes them and how they authenticate changes. It fits what we already recommend to clients in our LLM integration service.

For the second problem (orchestration of client agents with our agents) we're betting on A2A. We'll publish signed Agent Cards for each internal capability (classify-document, summarize-meeting, draft-email), the client will be able to discover them from their orchestrator agent, and traffic will be JSON-RPC over HTTP with OAuth 2.0. The key advantage of A2A versus "I'll make another REST API" is that the client doesn't have to learn our API: their agent discovers, reads the card, and operates.

What we have NOT chosen and why

We discarded building everything on pure MCP even though it's technically possible. The reason is that MCP is optimized for agent↔tool: if a client wants to delegate to Nexo with their own agent and obtain complex results (multi-step, with intermediate states, with exposed reasoning), A2A fits better. MCP isn't designed for agent↔agent conversations with shared memory.

We also discarded starting with A2A for everything, since for the case "client who wants to read/write workspace data with Claude" an MCP server is the short and standard route, with the largest client ecosystem behind it.

The broader lesson, for any SaaS considering how to open up to agents: it's not a binary decision, it's a decision per use case. Exposing data to a single client agent → remote MCP. Exposing a public web to agents that visit → WebMCP. Interoperating with client agents as peers → A2A.

When NOT to use MCP (or WebMCP or A2A)

A chapter missing in many articles. Agent protocols are very good in their domain and very bad outside of it. Some signs that you should NOT put MCP, WebMCP or A2A in your architecture:

- Extreme latency. If the operation requires submillisecond response (high-frequency trading, industrial control, video games), the overhead of JSON-RPC, the handshake, the tool description and the model round-trip kills any agent protocol. Use specific binary APIs.

- Massive binary streams. Video transcoding, image processing at volume, real-time audio streaming. MCP can invoke the job, but it's not the channel through which the bytes travel. Keep your specific pipelines.

- Long batch processes without an agent. If your case is "cron job processes a CSV every night", an AI agent with MCP is a sledgehammer to crack a nut. A script and a scheduler are still the right answer.

- Strict determinism. Banking operations, critical transactions, audit logs. An agent with tools can be in the loop, but the transaction engine must be deterministic, with documented retries and no margin for "the model chose another tool".

- Regulated compliance with exhaustive audit. If every call must leave a legally reproducible trace, you need a layer below the agent protocols — not above — to guarantee that record. It can be done, but adding an AI agent doesn't simplify compliance; it complicates it.

Related: in regulated sectors (healthcare, legal, financial), any AI agent architecture requires going through a phase of artificial intelligence consulting to understand what's allowed before choosing protocol. Choosing a protocol without going through that is the classic antipattern.

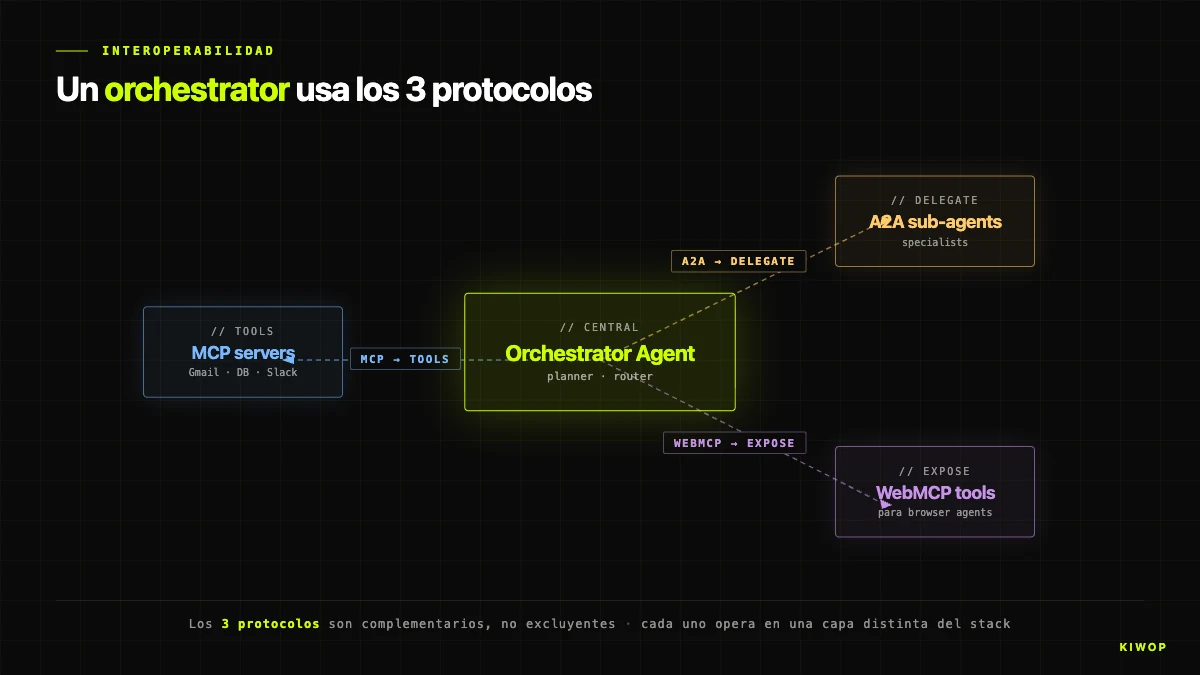

Interoperability: can you combine all three?

Yes, and in fact you likely will. The cleanest pattern combines the three protocols in a coherent stack.

The conceptual diagram:

- An orchestrator agent (highest level, speaks A2A outward).

- When it needs to use an internal tool (read a database, call an enterprise API) → MCP.

- When it needs to delegate a complex subtask to another specialized agent → A2A toward that agent.

- When it needs to act on an external website that doesn't expose its own API but does expose WebMCP → WebMCP client via browser.

The receiving A2A agent, in turn, internally uses MCP for its own tools. And if the receiving agent is a public SaaS with a frontend, it probably also exposes a WebMCP surface for human users with agents. The system is recursive and composable: each agent is a client of all three protocols and (potentially) a server of two (MCP and A2A).
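The conceptual diagram can be reduced to a routing table inside the orchestrator: each step of a plan is tagged with a kind, and the kind determines the protocol. The step kinds and the `route` helper below are hypothetical names chosen for this sketch; a real orchestrator would attach an actual client to each protocol.

```python
from enum import Enum


class Protocol(Enum):
    MCP = "MCP"
    A2A = "A2A"
    WEBMCP = "WebMCP"


# Hypothetical step kinds mirroring the conceptual list above.
ROUTES = {
    "internal_tool": Protocol.MCP,         # read a database, call an internal API
    "delegate_subtask": Protocol.A2A,      # hand work to a specialized agent
    "external_website": Protocol.WEBMCP,   # act on a site through the browser
}


def route(step_kind):
    """Pick the protocol for one step; fail loudly on unmapped kinds."""
    try:
        return ROUTES[step_kind]
    except KeyError:
        raise ValueError(f"no protocol mapped for step kind {step_kind!r}") from None


plan = ["internal_tool", "delegate_subtask", "external_website", "internal_tool"]
print([route(kind).value for kind in plan])
```

Keeping the mapping explicit makes the "one layer per problem" rule auditable: if two step kinds end up needing the same protocol for different reasons, that is a hint one layer may be unnecessary.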

This composition is exactly what sources like the Digital Applied protocol map or the WorkOS guide on MCP/ACP/A2A describe: protocols don't compete because they solve different layers. They compete with their alternatives in the same layer (WebMCP vs Markdown for Agents; A2A vs ACP), not with each other.

A nuance: avoid over-orchestration

Before putting three protocols in a system, ask yourself whether you need them. Most AI applications in production today need only MCP. A sizable minority need MCP + WebMCP (SaaS with a public frontend). A small but growing minority need MCP + A2A (multi-agent ecosystems). The triple case is real but rare. If you can't justify why each layer solves a different problem, one of them is probably unnecessary.

Table: when to use each one

Table: adoption maturity

Roadmap and adoption

What's at each stage according to current official sources:

- MCP: stable specification from November 25, 2025 with async operations and stateless servers; next relevant milestone, the MCP Dev Summit Europe announced for September 17–18, 2026 in Amsterdam. Signals to watch: consolidation of Streamable HTTP transport as the remote default, progressive retirement of legacy SSE, standardization of security patterns for tool poisoning.

- A2A: v1.0 published in March 2026 with Signed Agent Cards and formal AP2 extension. Signals to watch: growth of the public Agent Card registry, appearance of A2A gateways (equivalent to traditional API gateways), native integration with major orchestrators (LangGraph, CrewAI, AutoGen).

- WebMCP: early preview program open, partial implementation in Cloudflare, limited support in Chrome Canary. Signals to watch: acceptance of the W3C draft, Chromium Stable decision on default support, definitive positioning against Markdown for Agents.

- Adjacent standards: AGENTS.md (proposed by OpenAI, under AAIF), Open Responses (OpenAI's open specification for interoperable agent loops, reported by InfoQ in February 2026), and Skills (contributed by Block/Anthropic). None competes directly with MCP/WebMCP/A2A; they solve complementary pieces: repo description, loop interoperability, prompt bundling.

The least risky prediction for the next 6–12 months is that MCP consolidates as a commodity, A2A hits its first big wave of B2B deployments, and WebMCP remains contested until Chrome Stable decides whether or not to support it. If it decides not to, the proposal may die or migrate to an alternative solution (Markdown for Agents or another).

Frequently asked questions

Is MCP only from Anthropic?

Not since December 2025. Anthropic donated MCP to the newly created Agentic AI Foundation under the Linux Foundation, with Anthropic, OpenAI, Google, Microsoft, AWS, Block, Cloudflare and Bloomberg as platinum members. Today MCP is community-governed. Anthropic continues to contribute heavily to development but is no longer the sole decision-maker.

Does WebMCP replace my REST API?

No. WebMCP exposes a surface designed for agents operating from browsers. Your REST API remains the right route for backend-backend integrations, mobile apps, and clients authenticated with their own credentials. WebMCP adds a layer oriented to the user's agent; it doesn't replace the API.

Is A2A ready for production?

Yes for standard cases. The v1.0 published in March 2026 includes Signed Agent Cards and is considered fit for production. There are deployments at Microsoft, AWS, Salesforce, SAP and ServiceNow. However, the ecosystem of libraries and tooling is less mature than MCP's — expect to dedicate more of your own engineering to gateways, observability and Agent Card management.

Which SDK to use to start with MCP?

The official SDKs in Python and TypeScript cover most cases. To build servers in the Anthropic ecosystem, the official TypeScript SDK is the recommended route. For scripting and local servers, the Python SDK is the standard. Both are maintained by Anthropic with official support.

Can I use MCP with models that aren't from Anthropic?

Yes. Since March 2025, ChatGPT supports MCP natively; Copilot and Gemini too. MCP servers are model-agnostic: any client that speaks the protocol can consume them. That's the main reason MCP won — portability across providers is real, not marketing.

Is WebMCP the same as Cloudflare's Markdown for Agents?

No. They are two competing proposals with different philosophies. WebMCP exposes typed actions the agent invokes; Markdown for Agents exposes a structured markdown representation of the site for the agent to interpret. Both currently coexist and neither has won. If you want to cover both flanks, Cloudflare documents how to implement parallel support.

What's the real difference between A2A and ACP?

Conceptually they solve the same problem: horizontal communication between agents. A2A is pushed by Google and has much more traction (150+ organizations, Linux Foundation governance, v1.0 in production). ACP is pushed by IBM (BeeAI) with more limited adoption. Technically ACP uses more direct HTTP conventions; A2A uses JSON-RPC with Signed Agent Cards. If you're starting from scratch today, A2A has a better value/risk ratio thanks to the ecosystem.

Do I need all three protocols in my project?

Almost never. Most projects start with only MCP. A SaaS with public frontend adds WebMCP when agent traffic justifies the investment. An ecosystem with multiple interoperating agents adds A2A. The common mistake is putting all three in from day one with no clear business case; the healthy pattern is to start with MCP and add the rest only when there's a real problem to solve.

Conclusion: choose by layer, not by brand

The right question isn't "which protocol is better?" but "which layer am I solving?". MCP rules inside the agent, in the agent↔tool link. A2A rules between agents, in the horizontal link. WebMCP rules at the border between your public web and the agents visiting it. They're three different layers; they don't compete, they compose.

The operational lesson, after building the PA on MCP stdio and designing Nexo on remote MCP + A2A + WebMCP, is that the cost of choosing a protocol wrong is huge. Each protocol brings a mental model, a set of libraries, a client adoption curve and a long-term maintenance commitment. But it's also true — and perhaps this is most important — that portability between layers has never been greater. A well-designed MCP server is consumed from Claude, ChatGPT, Copilot, Gemini and Cursor without changes. A well-designed A2A agent is visible to any orchestrator speaking the protocol. A site with WebMCP responds to any agent visiting it. The investment in open protocols pays off quickly.

At Kiwop — Digital agency specialized in Software Development and Applied Artificial Intelligence for global clients in Europe and the US — we've been building on LLMs in production for two years and on MCP since early 2025. If your team is deciding today how to open your platform to AI agents or how to orchestrate a multi-agent system, we can help you choose the right architecture without over-engineering. Take a look at our services for AI agent development, artificial intelligence consulting and LLM integration — or write to us and we'll sit down.

And if you've found the technical journey interesting, follow the thread with the other posts in the cluster: "From OpenClaw to a PA with Claude Code and MCP", "Build your PA with Claude Code and MCP", "AI agents in production: patterns and antipatterns 2026" and "WebMCP: your website ready for AI agents".