Data Engineering and CDP: Without Clean Data There Is No AI

Snowflake · BigQuery · dbt · Segment · Airflow

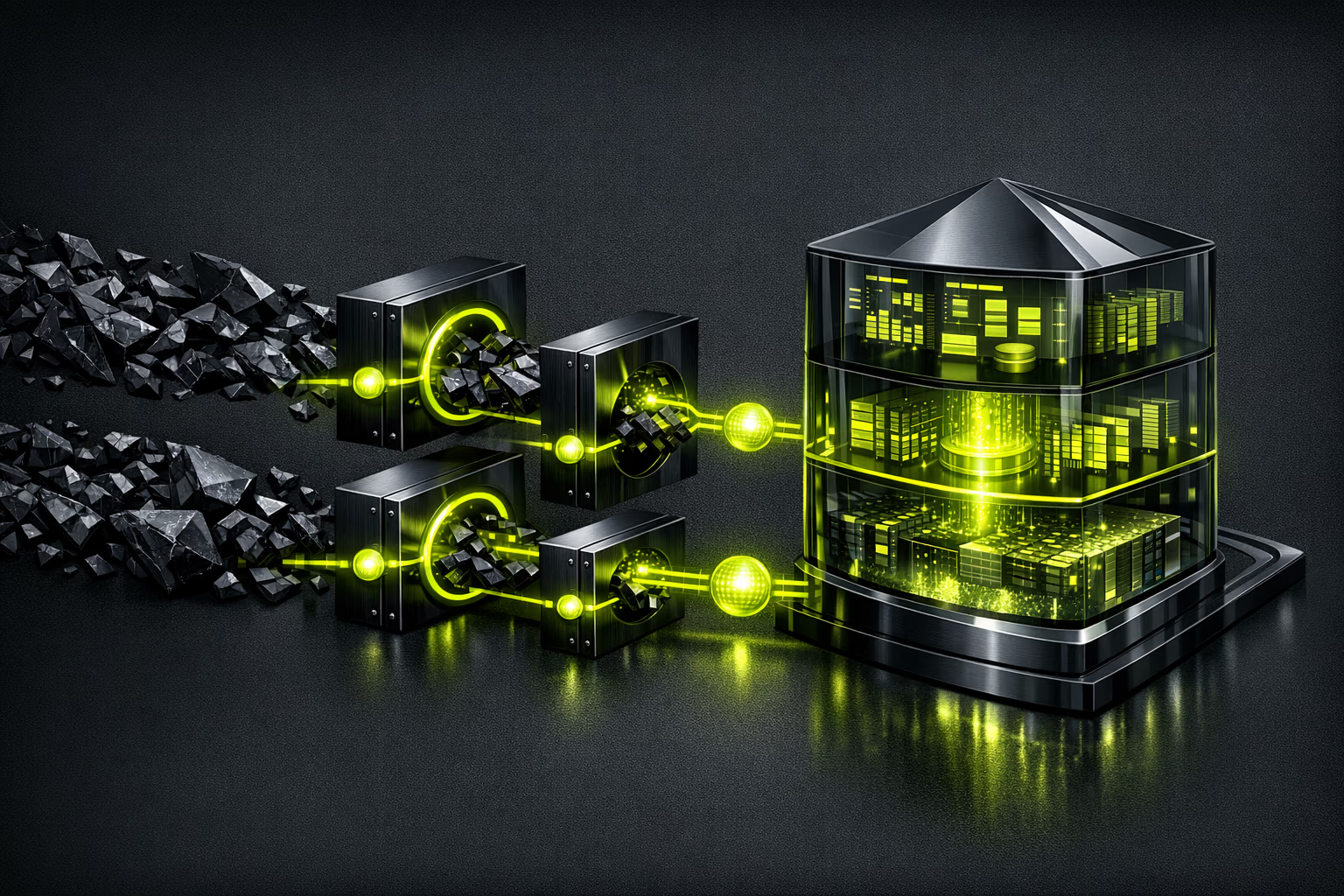

Without clean data there's no AI, no personalization, no informed decisions. Data engineering is the invisible layer that makes everything else work: pipelines, warehouses, CDPs and data quality.

Service Deliverables

End-to-end data infrastructure.

The Modern Data Stack in Action

From raw data to actionable insights.

The modern pattern is ELT (Extract, Load, Transform): you extract data from all your sources (CRM, web, app, ads), load it into a central warehouse, and transform it with dbt (data build tool). Transformations are written in SQL, versioned in Git, tested and documented. No more fragile Python scripts that nobody understands. The result: a warehouse where any team can run reliable queries.
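A minimal sketch of the ELT order of operations, using Python's built-in `sqlite3` as an in-memory stand-in for the warehouse. Raw rows are loaded untouched, and the business logic runs afterwards as SQL inside the "warehouse", which is the step a dbt model would own. The source rows and the table and column names (`raw_orders`, `raw_ad_spend`, `fct_daily_revenue`, `roas`) are hypothetical.

```python
import sqlite3

# Hypothetical "extracted" rows; real ingestion would use a connector such as Fivetran or Airbyte.
shop_orders = [
    ("o1", "2025-01-01", 120.0),
    ("o2", "2025-01-01", 80.0),
    ("o3", "2025-01-02", 50.0),
]
ad_spend = [("2025-01-01", 40.0), ("2025-01-02", 25.0)]

conn = sqlite3.connect(":memory:")  # in-memory stand-in for Snowflake/BigQuery

# Load: raw data lands as-is in raw tables, with no business logic applied yet.
conn.execute("CREATE TABLE raw_orders (order_id TEXT, order_date TEXT, amount REAL)")
conn.execute("CREATE TABLE raw_ad_spend (spend_date TEXT, spend REAL)")
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", shop_orders)
conn.executemany("INSERT INTO raw_ad_spend VALUES (?, ?)", ad_spend)

# Transform: SQL runs inside the warehouse to build a business-ready mart.
# In a dbt project, this SELECT would live in a version-controlled model file.
conn.execute("""
    CREATE TABLE fct_daily_revenue AS
    SELECT o.order_date            AS day,
           SUM(o.amount)           AS revenue,
           a.spend                 AS ad_spend,
           SUM(o.amount) / a.spend AS roas
    FROM raw_orders AS o
    JOIN raw_ad_spend AS a ON a.spend_date = o.order_date
    GROUP BY o.order_date, a.spend
""")

rows = conn.execute(
    "SELECT day, revenue, ad_spend, roas FROM fct_daily_revenue ORDER BY day"
).fetchall()
print(rows)  # [('2025-01-01', 200.0, 40.0, 5.0), ('2025-01-02', 50.0, 25.0, 2.0)]
```

Because the raw tables are kept, a transformation can be changed and rebuilt later without re-extracting anything from the sources.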

Executive Summary

For CEOs and data directors.

Grand View Research estimates the CDP (Customer Data Platform) market at $8.26 billion in 2025, and MarketsandMarkets (2025) projects it will reach $37.1 billion by 2030 (CAGR 30.7%). Data integration represents the largest investment in CDP projects, not the platform itself. Without solid data engineering, a CDP is spend with no return.

Gartner predicts that AI-powered workflows will reduce manual data management by 60% by 2027 (Gartner, 2025). But AI needs clean data to function. Investing in data engineering is investing in the infrastructure that enables all AI, analytics and personalization initiatives.

Kiwop has expertise in Python, analytics (GA4, BigQuery) and backend development. Data engineering is the infrastructure layer that connects our development, analytics and AI services into a cohesive offering.

Summary for CTO / Technical Team

Stack, tools and architecture.

Warehouses: Snowflake (multi-cloud, compute/storage separation, independent scaling), BigQuery (serverless, ideal for Google ecosystem), Databricks (lakehouse, unifies analytics and ML). Selection based on ecosystem, volume and budget.

ETL/ELT: dbt for transformations (SQL in Git, tests, auto-generated docs). Fivetran or Airbyte for ingestion (300+ connectors). Airflow or Dagster for orchestration. Everything versioned, reproducible, monitored.
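Orchestrators such as Airflow and Dagster model a pipeline as a directed acyclic graph (DAG) of tasks and run each task only after its upstream dependencies succeed. The sketch below illustrates that idea with Python's standard-library `graphlib` (Python 3.9+); it is not the Airflow or Dagster API, and the task names are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
pipeline = {
    "ingest_shopify": set(),
    "ingest_ga4": set(),
    "stg_orders": {"ingest_shopify"},
    "stg_sessions": {"ingest_ga4"},
    "mart_conversion": {"stg_orders", "stg_sessions"},
    "dbt_tests": {"mart_conversion"},
}

def run_order(dag):
    """Return one valid sequential execution order (raises graphlib.CycleError on cycles)."""
    return list(TopologicalSorter(dag).static_order())

def parallel_batches(dag):
    """Group tasks into waves whose members have no dependencies on each other."""
    sorter = TopologicalSorter(dag)
    sorter.prepare()
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # every task whose upstreams are done
        batches.append(ready)
        sorter.done(*ready)                 # mark the wave as finished
    return batches

order = run_order(pipeline)
batches = parallel_batches(pipeline)
print(batches)
# [['ingest_ga4', 'ingest_shopify'], ['stg_orders', 'stg_sessions'], ['mart_conversion'], ['dbt_tests']]
```

The waves show why a DAG matters: independent ingestion tasks can run in parallel, while tests only run once the mart they check has been rebuilt.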

CDPs: Segment (market standard, 400+ integrations), RudderStack (open-source, customer data pipeline), mParticle (enterprise, real-time audiences). Implementation includes identity resolution, consent management, and activation across channels (ads, email, CRM).

Is It Right for You?

Data engineering pays off when you have enough data volume and a clear use case. If your company still manages its data manually, it's time.

Who it's for

- Companies making decisions based on manual CSV exports and spreadsheets.

- Analytics teams that need reliable, automatically refreshed data.

- Organizations planning AI/ML initiatives that need clean data as a foundation.

- E-commerce and SaaS businesses that want to personalize experiences with unified customer data.

- Data directors who need a centralized warehouse with governance.

Who it's not for

- Very early-stage startups with little data (a CRM is enough).

- Companies without budget for cloud infrastructure (Snowflake and BigQuery are billed by usage).

- If you only need a dashboard, no-code tools like Looker Studio may suffice.

- Organizations with nobody consuming the data (an empty warehouse = spend with no ROI).

- If all your data comes from a single app and you don't need to join it with other sources.

Data Engineering Services

Verticals for building your data infrastructure.

Data Warehouse Design

Dimensional modeling, staging/marts schemas, partitioning and clustering. Snowflake, BigQuery or Databricks based on your ecosystem. Cost optimization built into the design.

ETL/ELT Pipelines

Ingestion with Fivetran or Airbyte (300+ connectors). Transformations with dbt (SQL in Git). Orchestration with Airflow or Dagster. Reproducible, testable pipelines.

CDP Implementation

Setup of Segment, RudderStack or mParticle. Identity resolution, GDPR consent management, and audience activation across channels (ads, email, CRM, web).
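Identity resolution merges events that share an identifier (an anonymous cookie ID, an email, a logged-in user ID) into one customer profile. A minimal deterministic sketch uses a union-find (disjoint-set) structure; the event shapes and identifier keys are hypothetical, and commercial CDPs add configurable matching rules and limits that this sketch does not model.

```python
from collections import defaultdict

class UnionFind:
    """Disjoint-set structure with path compression."""

    def __init__(self):
        self.parent = {}

    def find(self, x):
        self.parent.setdefault(x, x)
        root = x
        while self.parent[root] != root:
            root = self.parent[root]
        # Path compression: point every node on the path directly at the root.
        while self.parent[x] != root:
            self.parent[x], x = root, self.parent[x]
        return root

    def union(self, a, b):
        ra, rb = self.find(a), self.find(b)
        if ra != rb:
            self.parent[rb] = ra

# Hypothetical events: each carries whatever identifiers were known at that moment.
events = [
    {"anonymous_id": "anon-1", "email": "ana@example.com"},
    {"anonymous_id": "anon-2", "user_id": "u-42"},
    {"user_id": "u-42", "email": "ana@example.com"},
    {"anonymous_id": "anon-3"},
]

def resolve_identities(events):
    uf = UnionFind()
    for event in events:
        ids = [f"{key}:{value}" for key, value in event.items()]
        if not ids:
            continue
        uf.find(ids[0])  # register events that carry a single identifier
        for other in ids[1:]:
            uf.union(ids[0], other)  # identifiers seen together belong to one person
    profiles = defaultdict(set)
    for identifier in list(uf.parent):
        profiles[uf.find(identifier)].add(identifier)
    return sorted(sorted(p) for p in profiles.values())

profiles = resolve_identities(events)
print(profiles)
```

Two anonymous sessions end up in the same profile because the third event links the user ID and the email; `anon-3` stays on its own until some event ties it to a known identifier.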

Data Quality and Observability

Automated tests with dbt tests and Great Expectations. Monitoring of freshness, completeness, schema drift. Proactive alerts before users report issues.
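The three monitoring signals named above can be expressed as small checks. This is a plain-Python sketch of the logic only; in practice these checks would run through dbt tests, `dbt source freshness` or a Great Expectations suite. The expected schema, the 6-hour freshness limit and the sample data are assumptions.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical table contract and freshness limit.
EXPECTED_SCHEMA = {"order_id": "STRING", "amount": "NUMERIC", "created_at": "TIMESTAMP"}
MAX_STALENESS = timedelta(hours=6)

def schema_drift(expected, observed):
    """Columns added, removed or retyped relative to the expected schema."""
    shared = set(expected) & set(observed)
    return {
        "added": sorted(set(observed) - set(expected)),
        "removed": sorted(set(expected) - set(observed)),
        "retyped": sorted(c for c in shared if expected[c] != observed[c]),
    }

def is_stale(last_loaded_at, now, max_staleness=MAX_STALENESS):
    """True when the latest load is older than the allowed staleness."""
    return now - last_loaded_at > max_staleness

def completeness(rows, column):
    """Share of rows in which the column is populated."""
    if not rows:
        return 0.0
    return sum(r.get(column) is not None for r in rows) / len(rows)

observed = {"order_id": "STRING", "amount": "STRING", "created_at": "TIMESTAMP", "coupon": "STRING"}
drift = schema_drift(EXPECTED_SCHEMA, observed)

now = datetime(2025, 1, 2, 12, 0, tzinfo=timezone.utc)
stale = is_stale(datetime(2025, 1, 2, 3, 0, tzinfo=timezone.utc), now)  # 9 hours old

ratio = completeness([{"amount": 10}, {"amount": None}, {"amount": 5}, {}], "amount")
print(drift, stale, ratio)
```

Any non-empty drift list, a `True` staleness flag or a completeness ratio below an agreed threshold would trigger an alert before the data reaches dashboards.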

Real-Time Data Streaming

Real-time data pipelines with Kafka, AWS Kinesis or Google Pub/Sub. For use cases requiring sub-second latency: live personalization, fraud detection, real-time dashboards.
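Streaming consumers often aggregate events into fixed time windows, for example counts per minute feeding a live dashboard. The sketch below shows a tumbling-window count in plain Python over an iterable of `(epoch_seconds, event_type)` pairs; it does not use any Kafka, Kinesis or Pub/Sub client, the 60-second window is an assumption, and late or out-of-order events are not handled.

```python
from collections import Counter, defaultdict

WINDOW_SECONDS = 60  # assumed window size

def tumbling_window_counts(events, window=WINDOW_SECONDS):
    """Count events per type in fixed, non-overlapping windows.

    `events` is an iterable of (epoch_seconds, event_type) pairs, e.g. decoded
    messages from a topic or subscription (hypothetical shape).
    Returns {window_start: Counter(event_type -> count)}.
    """
    windows = defaultdict(Counter)
    for ts, event_type in events:
        window_start = ts - (ts % window)  # align to the start of the window
        windows[window_start][event_type] += 1
    return dict(windows)

events = [(0, "page_view"), (15, "add_to_cart"), (59, "page_view"), (60, "page_view"), (130, "purchase")]
counts = tumbling_window_counts(events)
print(counts)
```

A production consumer would emit each window as soon as it closes instead of collecting everything first, but the window-assignment arithmetic is the same.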

ML-Ready Infrastructure

Feature stores, versioned training datasets, data pipelines prepared for machine learning. The foundation for your AI team to work with clean, up-to-date data.
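One requirement behind versioned training datasets is point-in-time correctness: each training label should only see feature values that existed when the label was recorded, otherwise future information leaks into the model. Feature stores commonly provide this kind of "as-of" join; the sketch below implements it with the standard-library `bisect` module. The feature history and labels are hypothetical, and it treats a feature observed at exactly the label's timestamp as available.

```python
from bisect import bisect_right

# Hypothetical feature history per customer: (timestamp, value), sorted by time.
feature_history = {
    "c1": [(1, 0.10), (5, 0.40), (9, 0.70)],
    "c2": [(3, 0.25)],
}

# Hypothetical training labels: (customer_id, label_timestamp, label).
labels = [("c1", 4, 0), ("c1", 9, 1), ("c2", 2, 0), ("c2", 8, 1)]

def as_of(history, ts):
    """Latest feature value observed at or before ts, or None if none exists yet."""
    times = [t for t, _ in history]
    idx = bisect_right(times, ts)  # number of observations with time <= ts
    return history[idx - 1][1] if idx > 0 else None

def point_in_time_join(labels, feature_history):
    rows = []
    for customer, ts, label in labels:
        value = as_of(feature_history.get(customer, []), ts)
        rows.append((customer, ts, value, label))
    return rows

training_rows = point_in_time_join(labels, feature_history)
print(training_rows)
```

A naive join on the latest feature value would give the `c1` label at time 4 the value 0.70, which was only known at time 9.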

Implementation Process

From scattered data to centralized infrastructure.

Data Assessment

Mapping existing data sources, current quality, business requirements and use cases. Target architecture design with tool selection.

Warehouse Foundation

Setup of Snowflake/BigQuery/Databricks. Schema design (staging, intermediate, marts). Access policies and governance.

Ingestion Pipelines

Configuring connectors with Fivetran/Airbyte. First active data pipelines. Integrity validation against the source.
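Integrity validation against the source typically compares row counts and a checksum between the source extract and what landed in the warehouse. The sketch below computes an order-independent fingerprint by hashing each row with SHA-256 and summing the digests modulo 2^256, so row order does not matter but duplicates and altered values do. It assumes both sides have already been normalized to the same Python types; the sample rows are hypothetical.

```python
import hashlib

MOD = 2 ** 256

def table_fingerprint(rows):
    """Row count plus an order-independent checksum of the rows."""
    total = 0
    for row in rows:
        digest = hashlib.sha256(repr(tuple(row)).encode("utf-8")).digest()
        total = (total + int.from_bytes(digest, "big")) % MOD
    return len(rows), total

def validate_load(source_rows, destination_rows):
    src_count, src_sum = table_fingerprint(source_rows)
    dst_count, dst_sum = table_fingerprint(destination_rows)
    return {"row_count_match": src_count == dst_count, "checksum_match": src_sum == dst_sum}

source = [("o1", 120.0), ("o2", 80.0), ("o3", 50.0)]
loaded_ok = [("o3", 50.0), ("o1", 120.0), ("o2", 80.0)]   # same rows, different order
loaded_bad = [("o1", 120.0), ("o2", 80.0), ("o3", 55.0)]  # one value altered in transit

ok_result = validate_load(source, loaded_ok)
bad_result = validate_load(source, loaded_bad)
print(ok_result, bad_result)
```

Row counts alone would pass the corrupted load; the checksum is what catches the altered value.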

Transformations and Quality

dbt models for staging and business marts. Automated quality tests. Auto-generated documentation. Orchestration with Airflow.

CDP and Integrations

CDP implementation (if applicable). Identity resolution and consent management. Audience activation. Connection with analytics and BI tools.

Operations and Continuous Improvement

Pipeline monitoring, freshness alerts, warehouse cost optimization. Iteration cycles adding new sources and models.

Risks and Mitigation

The real risks of implementing data infrastructure.

Runaway warehouse costs

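FinOps built in from day 1: clustering, partitioning, auto-suspend, spend alerts. Snowflake bills for virtual warehouse compute time and BigQuery's on-demand pricing bills for bytes scanned, so we optimize every dbt model to process only the data it needs.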

Poor data quality

Automated tests in every pipeline: not_null, unique, referential integrity, freshness. Great Expectations for complex validations. Bad data never reaches marts.
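dbt's built-in generic tests (`not_null`, `unique`, `relationships`) compile to SQL queries that return failing rows, and a test passes when no rows come back. The sketch below mirrors the semantics of those three tests in plain Python over lists of dicts, including dbt's behavior of ignoring nulls in `unique` and `relationships`. It is not dbt's implementation, and the sample tables are hypothetical.

```python
def not_null(rows, column):
    """Failing rows: the column is missing or None."""
    return [r for r in rows if r.get(column) is None]

def unique(rows, column):
    """Failing values: non-null values that appear more than once."""
    seen, dupes = set(), set()
    for r in rows:
        value = r.get(column)
        if value is None:
            continue
        if value in seen:
            dupes.add(value)
        seen.add(value)
    return sorted(dupes)

def relationships(child_rows, fk, parent_rows, pk):
    """Failing rows: non-null foreign keys with no matching parent key."""
    parent_keys = {r[pk] for r in parent_rows}
    return [r for r in child_rows if r.get(fk) is not None and r[fk] not in parent_keys]

customers = [{"customer_id": 1}, {"customer_id": 2}]
orders = [
    {"order_id": "o1", "customer_id": 1},
    {"order_id": "o2", "customer_id": 3},     # customer 3 does not exist
    {"order_id": "o2", "customer_id": None},  # duplicate order_id and missing customer
]

failures = {
    "not_null_customer_id": not_null(orders, "customer_id"),
    "unique_order_id": unique(orders, "order_id"),
    "relationships_customer_id": relationships(orders, "customer_id", customers, "customer_id"),
}
# Gate: only promote the model to marts when every test returns zero failures.
publish_to_marts = not any(failures.values())
print(failures, publish_to_marts)
```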

GDPR non-compliance

PII identified and pseudonymized in the ingestion pipeline. Consent management integrated in CDP. Retention policies and right-to-erasure automated.
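One common way to pseudonymize PII during ingestion is a keyed hash (HMAC-SHA256): the same input always yields the same token, so joins across sources still work, while tokens cannot be recomputed by anyone who lacks the key. This is a sketch under assumptions: the list of PII fields is hypothetical, and the hard-coded key stands in for a secret that would come from a secrets manager.

```python
import hashlib
import hmac

# Assumption: in production this key comes from a secrets manager, never from code.
PSEUDONYMIZATION_KEY = b"replace-with-secret-from-vault"
PII_FIELDS = {"email", "phone"}  # hypothetical fields flagged as PII

def pseudonymize(value, key=PSEUDONYMIZATION_KEY):
    """Deterministic keyed token for a normalized PII value."""
    normalized = value.strip().lower()  # so "Ana@Example.com " and "ana@example.com" match
    return hmac.new(key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()

def scrub_record(record, pii_fields=PII_FIELDS):
    """Replace PII fields with tokens before the record is loaded into the warehouse."""
    return {
        k: (pseudonymize(v) if k in pii_fields and v is not None else v)
        for k, v in record.items()
    }

raw = {"order_id": "o1", "email": " Ana@Example.com ", "amount": 120.0}
clean = scrub_record(raw)
print(clean)
```

Non-PII fields pass through unchanged, and the raw email never reaches the warehouse.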

Fragile pipelines that break

Orchestration with Airflow/Dagster: automatic retries, Slack alerts, circuit breakers. Tests before every deploy. Transformations can be rolled back.
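Automatic retries usually combine exponential backoff with a final alert. Airflow exposes this through task arguments such as `retries` and `retry_delay`; the sketch below shows the underlying mechanism in plain Python. The `on_failure` callback is a hypothetical stand-in for a Slack alert hook, and `sleep` is injectable so the behavior can be tested without waiting.

```python
import random
import time

def run_with_retries(task, max_attempts=4, base_delay=1.0, max_delay=30.0,
                     sleep=time.sleep, on_failure=print):
    """Run task(); on an exception, retry with exponential backoff plus jitter.

    After the final failed attempt, on_failure is called with a message
    and the exception is re-raised so the orchestrator marks the task failed.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception as exc:
            if attempt == max_attempts:
                on_failure(f"task failed after {attempt} attempts: {exc!r}")
                raise
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))  # 1s, 2s, 4s, ...
            sleep(delay + random.uniform(0, delay / 10))  # jitter avoids synchronized retries

# Usage: a hypothetical extract step that fails twice, then succeeds.
calls = {"n": 0}

def flaky_extract():
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("source API timeout")
    return ["row1", "row2"]

delays = []
result = run_with_retries(flaky_extract, sleep=delays.append)
print(result, calls["n"], delays)
```

Transient failures such as API timeouts are absorbed silently, while persistent failures still surface as an alert and a failed task.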

Empty warehouse with no users

We start with a concrete use case (dashboard, CDP audience, ML feed) — not a generic warehouse. Demonstrable value by week 4.

From Manual CSVs to an Automated Warehouse

Mid-market e-commerce with data scattered across 15 sources: Shopify, GA4, Klaviyo, Meta Ads, Google Ads, ERP, CRM, and more. The analytics team spent 2 days/week preparing data manually. We implemented BigQuery + dbt + Fivetran + Segment: automated ingestion, tested transformations, CDP with activated audiences.

A CDP Without Data Engineering = Wasted Budget

Why infrastructure comes first.

Data integration represents the largest investment in CDP projects, not the platform. Why? Because without clean data pipelines, reliable identity resolution, and tested transformations, a CDP ingests garbage and activates wrong audiences. Investing in data engineering first is the most cost-effective decision before buying any marketing or AI tool.

Frequently Asked Questions About Data Engineering

What data directors and CTOs ask.

What is a data warehouse and why do I need one?

A data warehouse is a centralized database optimized for analytics. It stores data from all your sources (CRM, web, ads, ERP) transformed and ready to query. You need one when your teams waste time preparing data manually or make decisions with outdated data.

Snowflake, BigQuery or Databricks?

Snowflake: multi-cloud, compute/storage separation, ideal for SQL-first teams. BigQuery: serverless, zero management, perfect if you already use Google Cloud and GA4. Databricks: lakehouse that unifies analytics and ML, ideal if you have a data science team. We recommend based on ecosystem and use case.

What is dbt and why is it important?

dbt (data build tool) lets you write data transformations in SQL, version them in Git, test them automatically and document them. It turns the warehouse into a software project with the same engineering practices: CI/CD, code review, tests. It's the de facto standard in the modern data stack.

How much does a data warehouse implementation cost?

Initial setup (warehouse + pipelines + first models): €30K–€60K. With CDP included: €60K–€120K. Monthly infrastructure cost: from €500 (BigQuery serverless) to €5K+ (Snowflake enterprise). Team time savings usually cover the investment in 6–12 months.
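The payback period is the one-off setup cost divided by the net monthly saving (team time saved, valued at a loaded hourly cost, minus the running infrastructure bill). The inputs below are hypothetical and only illustrate the arithmetic; they are not figures from a real project.

```python
import math

def payback_months(setup_cost, monthly_hours_saved, hourly_cost, monthly_infra_cost):
    """Months until cumulative net savings cover the one-off setup cost.

    Returns math.inf when savings never exceed the running infrastructure cost.
    """
    net_monthly = monthly_hours_saved * hourly_cost - monthly_infra_cost
    if net_monthly <= 0:
        return math.inf
    return setup_cost / net_monthly

# Hypothetical inputs: €40K setup, 100 hours/month saved at €55/hour, €800/month infrastructure.
months = payback_months(setup_cost=40_000, monthly_hours_saved=100,
                        hourly_cost=55, monthly_infra_cost=800)
print(round(months, 1))  # 8.5
```

The same formula makes the sensitivity clear: payback depends far more on how many hours of manual work are actually eliminated than on the monthly infrastructure bill.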

Do I need a CDP or is a warehouse enough?

A warehouse is for analytics (querying historical data). A CDP is for activation (sending audiences to channels in real time). If you only need dashboards, a warehouse is enough. If you want personalization, dynamic segmentation or audiences for ads, you need a CDP.

How long does implementation take?

Warehouse + first pipelines: 4–6 weeks. Complete stack with CDP: 10–14 weeks. Demonstrable value (first dashboard with automated data) by week 4. We iterate incrementally — we don't wait for "everything" to be ready.

How do you handle GDPR in data pipelines?

PII (personal data) is identified and pseudonymized in the ingestion pipeline, before reaching the warehouse. Consent management integrated in CDP. Automated retention policies. Right to erasure implemented as a pipeline. Documentation ready for your DPO.

What if my current data quality is poor?

That's where we start. The first phase is a quality assessment: we identify gaps, duplicates, inconsistencies. Then we implement automated tests in every pipeline. Data quality isn't achieved in one shot — it's built with processes and automation.

Can I start small and scale up?

Absolutely. We recommend starting with 3–5 data sources and a concrete use case (a dashboard, a CDP audience, an ML dataset). Demonstrable value in weeks, not months. We scale by incrementally adding sources and models.

Is Your Data Siloed and Is Your Team Wasting Time Preparing It?

Free data infrastructure assessment. We map your sources, identify quality gaps, and design the target architecture.

Request data assessment

Initial technical consultation.

AI, security and performance. Diagnosis with phased proposal.

Your first meeting is with a Solutions Architect, not a salesperson.

Request diagnosis